Legal

Computer Systems Security: Planning for Success by Ryan Tolboom is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License, except where otherwise noted.

All product names, logos, and brands are property of their respective owners. All company, product and service names used in this text are for identification purposes only. Use of these names, logos, and brands does not imply endorsement.

Images used in this text are created by the author and licensed under CC-BY-NC-SA except where otherwise noted.

Acknowledgements

This material was funded by the Fund for the Improvement of Postsecondary Education (FIPSE) of the U.S. Department of Education for the Open Textbooks Pilot grant awarded to Middlesex College for the Open Textbook Collaborative.

The author would like to thank the New Jersey Institute of Technology (NJIT) Open Access Textbooks (OAT) project and the Open Textbook Collaborative Project (OTC) for making this text possible.

The author would also like to acknowledge the contributions and hard work of:

-

Karl Giannoglou, University Lecturer NJIT

-

Jacob Jones, NJIT 2023

-

Raymond Vasquez

-

Justine Krawiec, Instructional Designer NJIT

-

Alison Cole, Computing & Information Technology Librarian Felician University

-

Ricky Hernandez, NJIT 2024

-

Jake Caceres, NJIT 2024

Instructional Notes

The text, labs, and review questions in this book are designed as an introduction to the applied topic of computer security. With these resources students will learn ways of preventing, identifying, understanding, and recovering from attacks against computer systems. This text also presents the evolution of computer security, the main threats, attacks and mechanisms, applied computer operation and security protocols, main data transmission and storage protection methods, cryptography, network systems availability, recovery, and business continuation procedures.

Learning Outcomes

The chapters, labs, and review questions in this text are designed to align with the objectives of the CompTIA Security+ SY0-601 exam. The objectives are reproduced here for reference:

-

1.1 Compare and contrast different types of social engineering techniques.

-

1.2 Given a scenario, analyze potential indicators to determine the type of attack.

-

1.3 Given a scenario, analyze potential indicators associated with application attacks.

-

1.4 Given a scenario, analyze potential indicators associated with network attacks.

-

1.5 Explain different threat actors, vectors, and intelligence sources.

-

1.6 Explain the security concerns associated with various types of vulnerabilities.

-

1.7 Summarize the techniques used in security assessments.

-

1.8 Explain the techniques used in penetration testing.

-

2.1 Explain the importance of security concepts in an enterprise environment.

-

2.2 Summarize virtualization and cloud computing concepts.

-

2.3 Summarize secure application development, deployment, and automation concepts.

-

2.4 Summarize authentication and authorization design concepts.

-

2.5 Given a scenario, implement cybersecurity resilience.

-

2.6 Explain the security implications of embedded and specialized systems.

-

2.7 Explain the importance of physical security controls.

-

2.8 Summarize the basics of cryptographic concepts.

-

3.1 Given a scenario, implement secure protocols.

-

3.2 Given a scenario, implement secure network architecture concepts.

-

3.3 Given a scenario, implement secure network designs.

-

3.4 Given a scenario, install and configure wireless security settings.

-

3.5 Given a scenario, implement secure mobile solutions.

-

3.6 Given a scenario, apply cybersecurity solutions to the cloud.

-

3.7 Given a scenario, implement identity and account management controls.

-

3.8 Given a scenario, implement authentication and authorization solutions.

-

3.9 Given a scenario, implement public key infrastructure.

-

4.1 Given a scenario, use the appropriate tool to assess organizational security.

-

4.2 Summarize the importance of policies, processes, and procedures for incident response.

-

4.3 Given an incident, utilize appropriate data sources to support an investigation.

-

4.4 Given an incident, apply mitigation techniques or controls to secure an environment.

-

4.5 Explain the key aspects of digital forensics.

-

5.1 Compare and contrast various types of controls.

-

5.2 Explain the importance of applicable regulations, standards, or frameworks that impact organizational security posture.

-

5.3 Explain the importance of policies to organizational security.

-

5.4 Summarize risk management processes and concepts.

-

5.5 Explain privacy and sensitive data concepts in relation to security.

Example Schedule

A sample schedule utilizing these resources in a 15-week semester is shown below:

| Week | Chapters | Assignments | Learning Outcomes |
|---|---|---|---|
| 1 | | | 1.1, 1.2, 1.6, 2.7 |
| 2 | | | 1.2, 1.3, 1.6, 2.1, 2.4, 2.5, 2.8, 3.9 |
| 3 | | | 1.2, 1.3, 1.4, 2.5, 4.1, 4.3, 4.5 |
| 4 | | | 1.3, 1.6, 1.7, 3.1, 3.2, 4.1 |
| 5 | | Quiz 1 | 1.2, 1.3, 1.4, 1.8, 3.3, 3.4, 4.1, 4.2 |
| 6 | | Midterm Review | 1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8, 2.1, 2.4, 2.5, 2.7, 2.8, 3.1, 3.2, 3.3, 3.4, 3.8, 3.9, 4.1, 4.2, 4.3, 4.5 |
| 7 | | Midterm | 1.1, 1.2, 1.3, 1.4, 1.5, 1.6, 1.7, 1.8, 2.1, 2.4, 2.5, 2.7, 2.8, 3.1, 3.2, 3.3, 3.4, 3.8, 3.9, 4.1, 4.2, 4.3, 4.5 |
| 8 | | | 3.1, 3.2, 3.3, 3.6, 4.1, 4.2 |
| 9 | | | 2.1, 2.2, 2.4, 2.7, 3.3, 3.4, 3.8, 5.1 |
| 10 | | | 5.1, 5.2, 5.3, 5.4, 5.5 |
| 11 | | | 1.2, 1.3, 1.4, 1.7, 1.8, 2.3, 2.5, 3.1, 3.2, 3.3, 3.4, 4.1, 4.2, 4.3, 4.4, 4.5, 5.3, 5.4, 5.5 |
| 12 | | | 2.3, 3.6, 3.6 |
| 13 | Mobile Solutions | Quiz 2 | 3.5 |
| 14 | | Final Review | 1.2, 1.3, 1.4, 1.7, 1.8, 2.1, 2.2, 2.3, 2.4, 2.5, 2.7, 3.1, 3.2, 3.3, 3.4, 3.5, 3.6, 3.7, 3.8, 4.1, 4.2, 4.3, 4.4, 4.5, 5.1, 5.2, 5.3, 5.4, 5.5 |
| 15 | | Final Exam | 1.2, 1.3, 1.4, 1.7, 1.8, 2.1, 2.2, 2.3, 2.4, 2.5, 2.7, 3.1, 3.2, 3.3, 3.4, 3.5, 3.6, 3.7, 3.8, 4.1, 4.2, 4.3, 4.4, 4.5, 5.1, 5.2, 5.3, 5.4, 5.5 |

1. Introduction

1.1. Managing Risk

Information security (infosec) is largely the practice of preventing unauthorized access to data. Unauthorized access occurs when an actor gains access to data that they do not have permission to access, often by using a system in an unintended manner. Data has become an increasingly valuable asset, and the risks of others gaining access to it are incredibly high. Because of this, information security typically falls under a company’s risk-management plan, and its importance cannot be overstated. This is evidenced by the fact that information technology’s (IT) typical role in a company has migrated from a basic service provider to a directorship with a seat at the highest decision-making table, a shift driven directly by IT assets having become the most valuable things many companies own. Guarding these assets and managing the inherent risk of their loss is the job of information security professionals.

Malicious software, also referred to as malware, is often employed to help an attacker gain access to a system. Many types of malicious software exist, but the common thread is that they perform actions that cause harm to a computer system or network. In many attacks, system failure may occur either as an intended consequence (as in Denial of Service (DoS) attacks) or as an unintended one, meaning the system can no longer perform its intended purpose. System failure is a serious risk that needs to be managed.

1.2. Learning the Lingo

In general, the technical fields are laden with acronyms and obscure vocabulary. Unfortunately, security is no exception to this rule. Three of the most important acronyms to know at the start are CIA, AAA, and DRY.

1.2.1. CIA

While the Central Intelligence Agency does have a role to play in information security, for our purposes CIA is an acronym used to remember the three foundational information security principles: confidentiality, integrity, and availability. These ideas form the cornerstone of security and should be ever-present in your thoughts.

Confidentiality refers to the practice of keeping secret information secret. For example, if an e-commerce site stores credit card numbers (a questionable practice to begin with) those credit card numbers should be kept confidential. You would not want other users of the site or outsiders to have access to your credit card number. Many steps could be taken to ensure the confidentiality of user credit card numbers, but at this point it is enough to understand that maintaining confidentiality is a principle of security.

Integrity is an assurance that data has not been corrupted or purposefully tampered with. As we discussed previously, data is very valuable, but how valuable is it if you can’t be sure it is intact and reliable? In security we strive to maintain integrity so that the system, and even the controls we have in place to guard the system, can be trusted. Imagine that e-commerce site again. What would happen if an attacker could arbitrarily change delivery addresses stored in the system? Packages could be routed to the wrong addresses and stolen, and honest customers would not receive what they ordered, all because of an integrity violation.

Availability means that a system should remain up and running to ensure that valid users have access to the data when needed. In the simplest sense, you could ensure confidentiality and integrity by taking the system offline and not allowing any access. Such a system would be useless, and this final principle addresses that. Systems are designed to be accessible, and part of your security plan should be ensuring that they are. You will need to account for the costs of implementing confidentiality and integrity and make sure that the resources are available to keep the system working. Denial of service (DoS) attacks target availability directly, and keeping this principle in mind will help you mitigate some of those risks.

1.2.2. AAA

Another acronym you’re going to encounter in many different contexts is AAA. It stands for Authentication, Authorization, and Accounting and it is used in designing and implementing protocols. These concepts should be remembered when designing security plans.

Authentication is the process of confirming someone’s identity. This may be done with user names and passwords or, more frequently, via multi-factor authentication (MFA), which requires not only something you know (a password) but also something you have (a key fob, for example) or something you are (a fingerprint).

Authorization refers to keeping track of which resources an entity has access to. This can be done via a permission scheme or access control list (ACL). Occasionally you will encounter something more exotic where authorization limits users to interactions during a particular time of day or from a particular IP address.
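A minimal sketch of an ACL-based authorization check, including the kind of time-of-day restriction described above. The resource names, users, and time window are hypothetical, and a plain Python dictionary stands in for whatever permission store a real system would use.

```python
from datetime import time

# Hypothetical ACL: resource -> {user: set of permitted actions}
ACL = {
    "payroll.xlsx": {"alice": {"read", "write"}, "bob": {"read"}},
    "backup-server": {"ops": {"login"}},
}

# Hypothetical "exotic" rule: resource -> (earliest, latest) time of day access is allowed
TIME_WINDOWS = {
    "backup-server": (time(1, 0), time(5, 0)),
}

def is_authorized(user: str, resource: str, action: str, now: time) -> bool:
    """Return True only if the ACL grants the action and any time window is satisfied."""
    allowed = ACL.get(resource, {}).get(user, set())
    if action not in allowed:
        return False  # default deny: anything not explicitly granted is refused
    window = TIME_WINDOWS.get(resource)
    if window is not None:
        start, end = window
        if not (start <= now <= end):
            return False  # right permission, wrong time of day
    return True

print(is_authorized("bob", "payroll.xlsx", "read", time(9, 30)))    # True
print(is_authorized("bob", "payroll.xlsx", "write", time(9, 30)))   # False
print(is_authorized("ops", "backup-server", "login", time(12, 0)))  # False: outside window
```

The default-deny design means that a missing or mistyped ACL entry locks a user out rather than letting everyone in.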

Accounting refers to tracking the usage of resources. This may range from simply noting in a log file when a user has logged in to keeping track of exactly which services a user uses and how long they use them. Accounting is incredibly important because it allows you not only to monitor for possible problems, but also to inspect what has occurred after a problem is encountered. Accounting also allows system administrators to show irrefutably what actions a user has taken. This can be very important evidence in a court of law.

1.2.3. DRY

While we’re dispensing knowledge in the form of three letter acronyms (TLAs), another important acronym to keep in mind is DRY: Don’t Repeat Yourself.

Say something once, why say it again?[1]

Psycho Killer

This is, of course, not entirely literal. Just because you’ve explained something to a coworker once does not mean that you shouldn’t explain it again. It is meant more as a guide for how you make use of automation and how you design systems. Increasingly, security experts are asked to analyze not a single system, but a network of hundreds if not thousands of systems. In this case you should make use of scripts and tools to make sure you are not manually doing the same thing over and over. Have you found a good way of testing whether a part of a system is secure? Put it in a script so that you can reuse the test on other systems. The same logic applies to how systems are designed. Do you have a database of user login info? Reuse that database across multiple systems. In short, "Don’t repeat yourself!"
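As a minimal sketch of "put it in a script," the Python below packages one simple check (is a file writable by every user on a Unix-like system?) so the same test can be rerun against any list of paths. The list of sensitive files is hypothetical; in practice you would run a script like this on each host you manage.

```python
import os
import stat

def is_world_writable(path: str) -> bool:
    """Return True if any user on the system may write to path."""
    mode = os.stat(path).st_mode
    return bool(mode & stat.S_IWOTH)  # the "other" write permission bit

def audit_paths(paths):
    """Run the same check against every existing path and return the risky ones."""
    return [p for p in paths if os.path.exists(p) and is_world_writable(p)]

if __name__ == "__main__":
    # Hypothetical list of sensitive files to audit on each host
    sensitive = ["/etc/passwd", "/etc/shadow", "/etc/sudoers"]
    for path in audit_paths(sensitive):
        print(f"WARNING: {path} is world-writable")
```

Once the check lives in a function, adding a second test or a second host is a one-line change instead of another manual inspection.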

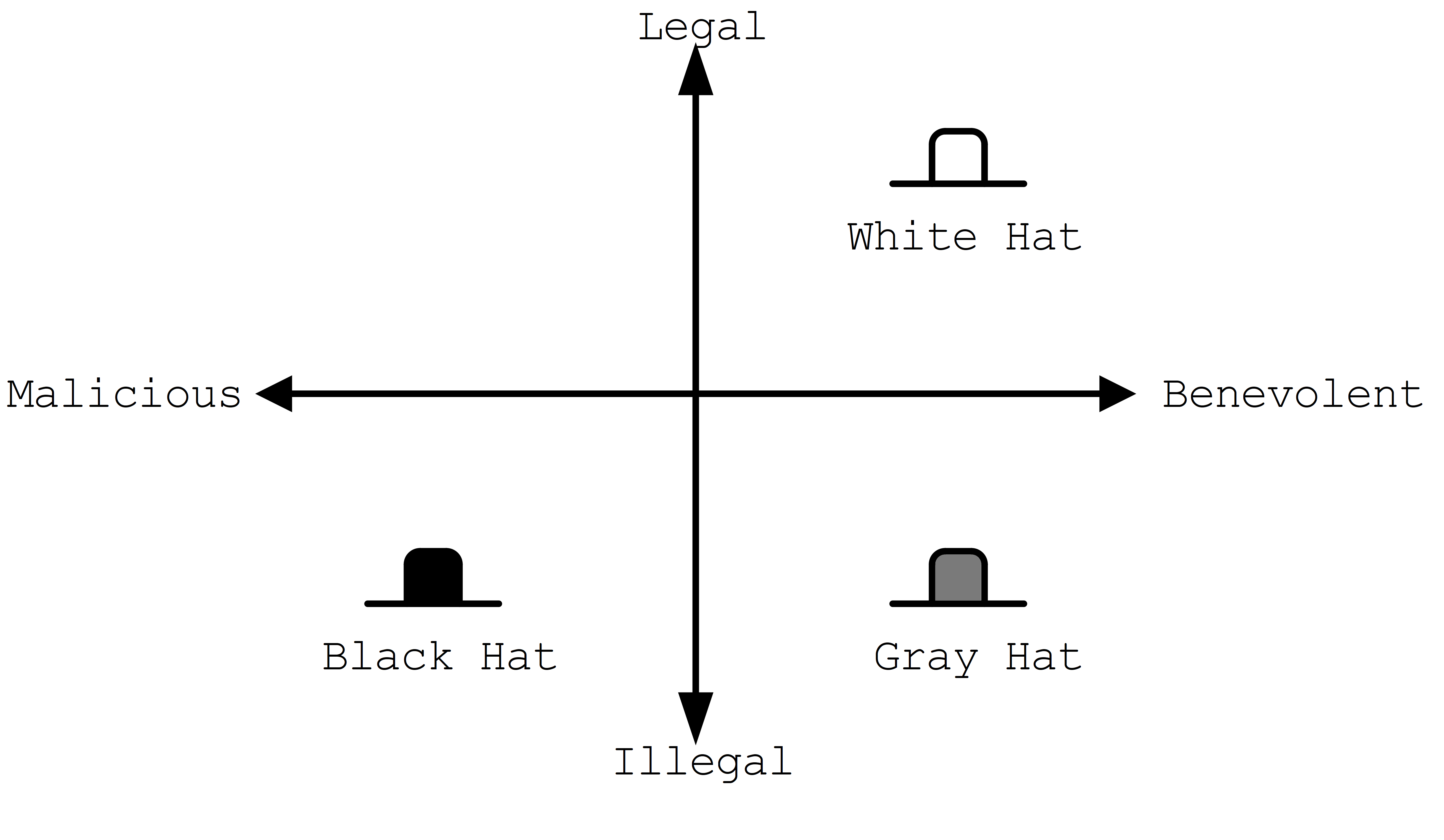

1.3. Hacker Culture

The term hacker comes from the sound that programmers would make when typing, or hacking away, at keyboards. Originally, a hacker was anyone who worked feverishly at a problem on a computer, and the term cracker was used to describe people who attempted to bypass security systems. The distinction was eventually lost, and hacker has become the popular term for someone attempting to gain unauthorized access to data. For an interesting glimpse into early hacker culture and reasoning, read The Conscience of a Hacker by The Mentor, originally published in Phrack Magazine in 1986.

1.4. Threat Actors

To better manage the risks of a data breach, it helps to be able to identify and understand the attacker, or threat actor, involved. Just as there are many reasons an actor may attempt to gain unauthorized access, there are also many groups of threat actors.

Neophytes making use of automated tools that they may not fully understand are often referred to as script kiddies. You may hear other pejorative names as well, such as lamer, noob, or luser, but the common thread is that these threat actors are not highly sophisticated. The same techniques used for automating defensive security can also be applied to automating attacks. Unfortunately, this means that you may encounter actors "punching above their weight," using complex tools while having only a rudimentary understanding of what they do.

Hacktivists are threat actors that attack to further social or political ends. These groups can be very sophisticated. The best-known hacktivist group is Anonymous, which has been linked to several politically motivated attacks.

Organized crime is another element that may employ or support threat actors, typically to make money. These groups typically have access to more resources and contacts than a solo actor. It is important to note that threat actors with roots in organized crime may find it easier to migrate into other areas of crime due to their proximity to a large criminal enterprise. For example, while it may be difficult for a script kiddie to broker the sale of valuable data, a hacker working with an organized crime syndicate may have people close to them who are familiar with the sale of stolen goods.

The last group of threat actors, and arguably the group with the most resources, are threat actors working with or for governments and nation states. These groups may have the explicit or implicit permission of their country to commit cyber crimes targeting other nations. Given the constant threat they pose and the resources available to them, these groups are referred to as advanced persistent threats (APTs). By utilizing the resources of a nation (often including its intelligence and military resources), APTs are a severe threat.

1.5. Security Plans

While confronting such a diverse array of actors can seem daunting at first, the key element to being successful is having a plan. A security plan analyzes the risks, details the resources that need to be protected, and presents a clear path to protecting them. Typically a security plan utilizes the three types of security controls available: physical, administrative, and technical.

-

Physical controls are things like door locks, cameras, or even the way rooms in a building are laid out. These things can have a dramatic impact on the overall security and should not be overlooked!

-

Administrative controls include human resources (HR) policies, classifying and limiting access to data, and separating duties. It helps to have a whole-organization understanding of security to make it easier to put these controls in place.

-

Technical controls are often what new security professionals think of first. These are things like intrusion detection systems (IDS), firewalls, anti-malware software, etc. While these are an important segment of security, and the segment that falls almost entirely within the purview of IT, it is critical to remember that they are only as strong as the physical and administrative controls that support them!

| Physical controls definitely lack the cool factor that technical controls have. Movies typically show security professionals hunched over laptops typing frantically or scrolling rapidly through pages and pages of logs on a giant screen. Rarely do they show them filling out a purchase order (PO) to have a locksmith come in and re-key the locks to the data closet. Just because it isn’t cool doesn’t mean it isn’t important! Remember, once an attacker has physical access, anything is possible. |

1.6. Tools of the Trade

With all of this talk regarding how and why hackers attack systems, the question remains, "What can be done?" There are a few tools the security professional employs that are worth mentioning at this juncture including: user awareness, anti-malware software, backups, and encryption.

- User Awareness

-

A major risk, some would argue the biggest risk, is that unprepared users will run malware programs or perform other harmful actions as directed by actors looking to gain access. These actors may impersonate others or use other social engineering tactics to get users to do as they say. Probably the scariest fact is the ease with which a massive attack requiring little effort can be performed. Threat actors do not even need to personally reach out to users; they could simply send a mass email. Through training programs and other methods of interaction, a security professional can make users aware of these threats and train them to act accordingly. Raising user awareness is a critical component of any security plan.

- Anti-Malware Software

-

Given how prevalent the use of malware is, a host of tools have been developed to prevent its usage. These tools may filter download requests to prevent downloading malware, monitor network traffic to detect active malware patterns, scan files for malware signatures, or close operating system loopholes used by malware. A security plan will typically detail the type of anti-malware software being used as well as the intended purpose of its usage.

- Backups

-

Maintaining a copy of the data used by a system can be a quick solution to the problems of ransomware and other attacks aimed at causing or threatening system failure. While a backup does not solve the problem of the data being sold or used by others, it does allow for a quick recovery in many instances and should be part of a security plan.

- Encryption

-

At its simplest, encryption obfuscates data so that a key is required to make it useful. Encryption can be employed to make copies of data obtained through unauthorized access useless to attackers who do not have the key. Often, encryption and backups complement each other and fill in the use cases that each lacks individually. As such, encryption will show up multiple times and in multiple ways in an average security plan.
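To make "scan files for malware signatures" from the anti-malware description concrete, here is a deliberately simplified sketch that treats a signature as the SHA-256 hash of a known-bad file. Real anti-malware engines use far richer signatures (byte patterns, heuristics, behavior monitoring); the signature set below is a hypothetical placeholder.

```python
import hashlib
from pathlib import Path

# Hypothetical signature database: SHA-256 digests of known-malicious files.
# A real product ships, and constantly updates, a very large database.
KNOWN_BAD_SHA256 = {"0" * 64}

def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large files don't need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def scan_directory(root, signatures=KNOWN_BAD_SHA256):
    """Return every file under root whose digest matches a known-bad signature."""
    return [
        p for p in Path(root).rglob("*")
        if p.is_file() and sha256_of_file(p) in signatures
    ]
```

Exact-hash matching is easy to evade (changing a single byte changes the hash), which is one reason commercial tools layer several detection techniques.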

1.7. Lab: Think Like a Hacker

For this lab, we will be engaging in a thought experiment. Imagine you are at a university that is having a student appreciation breakfast. At the entrance to the cafeteria an attendant has a clipboard with all of the student IDs listed. Students line up, show their ID, and their ID number is crossed off of the list.

Thinking like a hacker, how would you exploit this system to get extra free breakfasts? Feel free to think outside the box and make multiple plans depending on the circumstances you encounter when you go to the breakfast.

Here is an example that you cannot use:

| Come up with at least five different ways of getting free breakfasts and map them to real-world information security attacks. If you are unfamiliar with any information security attacks, you may want to start by researching attacks and then mapping them to free breakfast ideas. |

1.8. Review Questions

-

In terms of information security, what does CIA stand for? What do each of the principles mean?

-

Why is it important to have a security plan? What types of controls can a security plan make use of? Give an example of each.

-

How do backups and encrypted data complement each other? Explain.

2. Cryptography

This chapter is meant to serve as a brief and gentle introduction to the cryptographic concepts often encountered in the field of security. It is by no means exhaustive, but it should provide a basis for a better understanding of why protocols are designed the way they are. Cryptography is a method of scrambling data into unreadable text. It allows us to transform data into a secure form so that unauthorized users cannot view it.

2.1. Why do we need cryptography?

Cryptography is used to set up secure channels of communication, but it can also be used to provide non-repudiation of actions, basically leaving digital footprints that show someone did something. This means that cryptography allows us to provide authentication, authorization, and accounting (AAA).

By using a secure and confidential encrypted channel, we can be sure that anyone who intercepts our communications cannot "listen in." This helps prevent man-in-the-middle (MITM) attacks. Cryptography can also be used to provide integrity: proving that the data has not been altered. With cryptography you can provide a signature for the data showing that the person who claims to have sent it really did send it. Cryptography also allows for non-repudiation, as it can show that only one person was capable of sending a particular message. Lastly, cryptography allows us to perform authentication without storing passwords in plaintext. This is critical in an age where data breaches are increasingly common.

2.2. Terminology

Going forward, it is important to address some common cryptography terms as they will be used frequently:

- Plaintext

-

unencrypted information, data that is "in clear", or cleartext

- Cipher

- Ciphertext

-

data that has undergone encryption

- Cryptographic algorithm

-

a series of steps to follow to encrypt or decrypt data

- Public key

-

information (typically a byte array) that can be used to encrypt data such that only the owner of the matching private key can decrypt it

- Private (secret) key

-

information (typically a byte array) that can be used to decrypt data encrypted using the corresponding public key

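One of the most basic examples of encryption is the substitution cipher, in which each letter is replaced by another letter. (The Caesar cipher is the special case in which every letter is shifted by the same amount.) It is easy to understand and compute, and trivial to crack. Let’s create a table that maps every letter in the alphabet to a substitute letter: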

| A | B | C | D | E | F | G | H | I | J | K | L | M | N | O | P | Q | R | S | T | U | V | W | X | Y | Z |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| J | G | T | Q | X | Y | A | U | C | R | V | I | F | H | O | K | L | E | D | B | W | S | Z | M | N | P |

Now creating a message is simply a matter of performing the substitutions.

For example, HELLO WORLD becomes UXIIO ZOEIQ.
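The substitution table above can be expressed directly in Python with `str.maketrans`. This is a minimal sketch; the function names are illustrative, and characters outside A to Z (such as the space) simply pass through unchanged.

```python
import string

# The substitution table from the text: plaintext letter -> ciphertext letter
PLAIN = string.ascii_uppercase
CIPHER = "JGTQXYAUCRVIFHOKLEDBWSZMNP"

ENCRYPT_TABLE = str.maketrans(PLAIN, CIPHER)
DECRYPT_TABLE = str.maketrans(CIPHER, PLAIN)  # the same table read in reverse

def encrypt(message: str) -> str:
    # Letters are substituted; spaces and punctuation are left alone
    return message.upper().translate(ENCRYPT_TABLE)

def decrypt(ciphertext: str) -> str:
    return ciphertext.upper().translate(DECRYPT_TABLE)

print(encrypt("HELLO WORLD"))  # UXIIO ZOEIQ
print(decrypt("UXIIO ZOEIQ"))  # HELLO WORLD
```

Notice that the key here is the entire 26-letter table: anyone holding it can decrypt, which is what makes it a symmetric scheme.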

While this is simple to understand and set up, it is also very easy to break. You could use a frequency attack, where you analyze a large chunk of encrypted text knowing that certain letters are more frequent than others. By matching up the most frequently used ciphertext letters with their standard English equivalents, you may quickly reach a solution. You could also try every permutation of the alphabet (26!, roughly 4 × 10^26 possibilities) and see which gives you the most English words. Computing speeds up both attacks, but even a computer cannot realistically test 4 × 10^26 keys, which is why frequency analysis, which a computer can automate in moments, is the practical attack.
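The first step of a frequency attack can be sketched in a few lines. This is a rough illustration, not a complete solver: the English letter ordering below is one commonly cited approximation (an assumption), and a real attack would refine the initial guess using common words and letter pairs.

```python
from collections import Counter

# Approximate ordering of letters by frequency in English text (an assumption;
# published tables differ slightly)
ENGLISH_ORDER = "ETAOINSHRDLCUMWFGYPBVKJXQZ"

def letter_frequencies(ciphertext: str) -> Counter:
    """Count each letter, ignoring spaces and punctuation."""
    return Counter(ch for ch in ciphertext.upper() if ch.isalpha())

def first_guess(ciphertext: str) -> dict:
    """Pair the most common ciphertext letters with the most common English letters."""
    ranked = [letter for letter, _ in letter_frequencies(ciphertext).most_common()]
    return dict(zip(ranked, ENGLISH_ORDER))

print(letter_frequencies("UXIIO ZOEIQ").most_common(2))  # [('I', 3), ('O', 2)]
```

On a two-word message the guess is mostly wrong; the technique only becomes reliable with a "large chunk of encrypted text," as described above.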

2.3. Keys

Typically a series of random bytes can be used as a key to either encrypt or decrypt data. A key is used by a cryptographic algorithm to change plaintext to ciphertext. Keys may also be asymmetric in that they can only be used to perform one of the operations (either encryption or decryption).

It is important to understand what makes a cryptographic key strong. Length plays an important role: the longer the key, the harder it is to crack the encryption, since each additional bit doubles the number of possible keys. Likewise, the randomness of the data in the key also makes it stronger; if the byte sequence is somehow predictable, the length is irrelevant. Finally, we have the concept of a cryptoperiod, or the lifetime of a key. If a system frequently changes keys, an attacker may not have enough time to crack any one of them.
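Both length and randomness can be handled when a key is generated. A minimal sketch using Python's standard `secrets` module, which draws from the operating system's cryptographically secure random source; the 32-byte (256-bit) default is an illustrative choice, not a requirement from the text.

```python
import secrets

def generate_key(num_bytes: int = 32) -> bytes:
    """Return num_bytes of cryptographically secure random data (32 bytes = 256 bits)."""
    # Use secrets, not the random module: random is predictable by design
    # and must never be used to generate keys.
    return secrets.token_bytes(num_bytes)

key = generate_key()
print(key.hex())
print(f"Keyspace for a {len(key) * 8}-bit key: 2**{len(key) * 8} possible keys")
```

Rotating keys to respect a cryptoperiod is then just a matter of calling the generator again and distributing the new key.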

2.4. Mathematical Foundation

Cryptography relies largely on the concept of one-way or trapdoor functions, that is, processes that are easy to compute in one direction but hard to compute in the other. For example, it is much easier for a computer to multiply large numbers than to determine the factors of a large number. This is the foundation of the RSA algorithm. A simplified version of the algorithm is shown below:

KEY GENERATION

p = a random prime number

q = a random prime number

N = p * q

r = (p - 1) * (q - 1)

K = a number which equals one when modded by r (K % r == 1) and can be factored

e = a factor of K that doesn't share factors with r

d = the other factor of K, K / e (so that (e * d) % r == 1)

Your public key is N and e

Your private key is N and d

ENCRYPTION

ciphertext = (cleartext**e)%N

DECRYPTION

cleartext = (ciphertext**d)%N

EXAMPLE

p = 7

q = 13

N = 7 * 13 = 91

r = 72

K = 145 (because 145%72 = 1)

e = 5

d = 29

Public Key = 91, 5

Private Key = 91, 29

cleartext = 72 ('H' in ASCII)

ciphertext = (72**5)%91 = 11 (encrypted using N and e)

cleartext = (11**29)%91 = 72 (decrypted using N and d)

In order to crack RSA you would need to be able to factor N into its two prime factors. While that is trivial in our simple example, imagine how difficult it would be to factor a 2048-bit number (about 617 decimal digits), a common minimum recommended key size for RSA today. You’ll notice that the algorithm only requires exponentiation, multiplication, and modular arithmetic. At no point do you have to factor a large number to generate keys, encrypt, or decrypt. You only have to perform that operation if you’re trying to work backwards without the keys.
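The worked example translates almost line for line into Python. This sketch reuses the example's numbers (far too small to be secure) and relies on two built-in features: three-argument `pow` for fast modular exponentiation, and `pow(e, -1, r)` for the modular inverse, which requires Python 3.8 or later. Computing d this way is a swapped-in shortcut for the "factor K" step, and yields the same value.

```python
# Toy RSA using the numbers from the worked example (NOT secure: N is tiny)
p, q = 7, 13
N = p * q               # 91, part of both the public and private key
r = (p - 1) * (q - 1)   # 72, kept secret
e = 5                   # public exponent; shares no factors with r
d = pow(e, -1, r)       # 29, the modular inverse: (e * d) % r == 1

def encrypt(cleartext: int) -> int:
    # Three-argument pow computes (cleartext**e) % N without building a huge number
    return pow(cleartext, e, N)

def decrypt(ciphertext: int) -> int:
    return pow(ciphertext, d, N)

c = encrypt(ord("H"))                   # 72 -> 11
print(c, decrypt(c), chr(decrypt(c)))   # 11 72 H
```

Real implementations also add randomized padding before encrypting, because raw ("textbook") RSA like this always maps the same cleartext to the same ciphertext.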

Other similar one-way functions exist based on elliptic curves. Points on an elliptic curve can be combined using a simple, repeatable operation, so you can begin at a known start point and apply that operation some number of times to arrive at an end point. Given the start point and the number of iterations, computing the end point is fast. If all you know is the start and end points, however, determining how many iterations were performed (the elliptic curve discrete logarithm problem) is infeasible for suitably large curves, so the iteration count can serve as a private key.
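The start point, iteration count, and end point can be demonstrated on a deliberately tiny curve. This sketch assumes the textbook-sized curve y² = x³ + 2x + 2 over the integers mod 17 with start point (5, 1); real systems use standardized curves over fields hundreds of bits wide. `None` represents the curve's "point at infinity."

```python
# Toy curve: y^2 = x^3 + 2x + 2 (mod 17), textbook-sized and NOT secure
P_MOD, A = 17, 2
G = (5, 1)  # the start (base) point

def point_add(p1, p2):
    """Add two curve points; None is the point at infinity (the identity)."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # a point plus its mirror image gives the point at infinity
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow(2 * y1, -1, P_MOD) % P_MOD  # tangent line
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (slope * slope - x1 - x2) % P_MOD
    y3 = (slope * (x1 - x3) - y1) % P_MOD
    return (x3, y3)

def scalar_mult(k, point):
    """Apply the operation k times using double-and-add: fast even for huge k."""
    result, addend = None, point
    while k > 0:
        if k & 1:
            result = point_add(result, addend)
        addend = point_add(addend, addend)
        k >>= 1
    return result

def brute_force_k(target, point, limit=100):
    """Recover k by trying every count in turn; only feasible because the curve is tiny."""
    current = None
    for k in range(1, limit):
        current = point_add(current, point)
        if current == target:
            return k
    return None

end_point = scalar_mult(7, G)          # private "iteration count" k = 7
print(end_point, brute_force_k(end_point, G))
```

On a real curve the forward direction (`scalar_mult`) still takes only a few hundred steps, while the brute-force loop would need more iterations than could ever be run.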

2.5. Hashes

A hashing algorithm is a one-way function that creates a fixed-size hash from plaintext. It is often used for data validation, as a relatively small hash digest can demonstrate the integrity of a large block of data. Hashes can also be used so that sensitive information does not have to be stored in cleartext. By storing a hash of a password, you can check to see if the correct password was entered without storing the password itself. In the case of a data breach only the hashes are leaked, and the attacker does not have access to the passwords to try with other services.
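A minimal integrity check with Python's `hashlib`: hash the data, store or publish the digest, and recompute it later. The two sample messages are made up to echo the delivery-address example from Chapter 1.

```python
import hashlib

def digest(data: bytes) -> str:
    """Return the SHA-256 digest of data as a 64-character hex string."""
    return hashlib.sha256(data).hexdigest()

original = b"Ship to: 123 Main St."
tampered = b"Ship to: 124 Main St."

print(digest(original))
print(digest(tampered))
print(digest(original) == digest(tampered))  # False: one changed byte alters the whole digest
```

On its own, a bare hash only detects accidental or careless changes; an attacker who can alter the data may also be able to replace the stored digest, which is where keyed hashes and signatures come in.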

Two main families of hash algorithms are in common use: MD5 and SHA. MD5 produces a 128-bit hash value and is still often used to verify data integrity against accidental corruption. It is cryptographically broken (practical collision attacks exist), so it should not be relied on against a deliberate attacker, but you may still see it in use. The SHA family of algorithms consists of SHA-1, SHA-2, and SHA-3:

-

SHA-1: 160 bits, similar to MD5, designed by the NSA, no longer approved for cryptographic use

-

SHA-2: SHA-256 and SHA-512, very common, with the number indicating the digest (output) size in bits, designed by the NSA

-

SHA-3: non-NSA designed, not widely adopted, similar numbering scheme as SHA-2 (SHA3-256, etc.)
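Python's `hashlib` reports each algorithm's digest size and internal block size, which makes the naming scheme easy to check: the number in SHA-256 or SHA3-256 matches the digest size, while the block sizes (512 bits for SHA-256, 1024 bits for SHA-512) are different. The algorithm list is an illustrative selection.

```python
import hashlib

ALGORITHMS = ["md5", "sha1", "sha256", "sha512", "sha3_256", "sha3_512"]

def sizes_in_bits(name: str):
    """Return (digest size, internal block size) in bits for a hashlib algorithm."""
    h = hashlib.new(name)
    return h.digest_size * 8, h.block_size * 8  # hashlib reports sizes in bytes

for name in ALGORITHMS:
    digest_bits, block_bits = sizes_in_bits(name)
    print(f"{name:>8}: {digest_bits:>3}-bit digest, {block_bits:>4}-bit block")
```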

Dictionary-based attacks against password hashes are fairly common. Typically, software is used to generate a hash for every word in a dictionary and then compare each hash to what is stored on the compromised machine. One way to combat this is salting, or adding random bits (the salt) to each password before hashing. The salt is stored alongside the hash. This forces a dictionary-based attack to generate hashes for each specific salt, as opposed to using a precomputed table (a rainbow table) of all the possible hashes. Depending on the complexity of the password, this can make attacks go from instant to days or even years.
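A minimal sketch of salted password storage with the standard library. It swaps in PBKDF2 (`hashlib.pbkdf2_hmac`), a deliberately slow key-stretching function, rather than a single plain hash, which goes beyond what the paragraph describes; the iteration count is an illustrative value, not a recommendation.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor; raising it slows attackers (and logins)

def hash_password(password: str):
    """Return (salt, hash). A fresh random salt is generated for every password."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest  # both are stored; the salt is not secret

def verify_password(password: str, salt: bytes, stored: bytes) -> bool:
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return hmac.compare_digest(candidate, stored)  # constant-time comparison

salt, stored = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, stored))  # True
print(verify_password("Tr0ub4dor&3", salt, stored))                   # False
```

Because every user gets a different salt, two users with the same password end up with different stored hashes, and a precomputed table is useless.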

An even better way of combating attacks against hashes is through a secret salt, or pepper. A pepper is a random value that is added to the password but not stored with the resulting hash. Instead, it can be stored on a separate medium, such as a hardware security module (HSM).
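One way to sketch a pepper is to mix it in with an HMAC before the salted hash. This is an assumption for illustration (other constructions exist), and here the pepper is simply generated in memory; a real deployment would load it from an HSM or another store kept apart from the password database.

```python
import hashlib
import hmac
import os

# Hypothetical pepper: in practice loaded from an HSM or separate secrets store,
# never saved next to the password hashes.
PEPPER = os.urandom(32)
ITERATIONS = 100_000  # illustrative work factor

def _peppered(password: str, pepper: bytes) -> bytes:
    # Keyed hash of the password; without the pepper, this step can't be reproduced
    return hmac.new(pepper, password.encode(), hashlib.sha256).digest()

def hash_with_pepper(password: str, pepper: bytes = PEPPER):
    salt = os.urandom(16)  # stored with the hash, as before
    digest = hashlib.pbkdf2_hmac("sha256", _peppered(password, pepper), salt, ITERATIONS)
    return salt, digest    # note: the pepper is NOT returned or stored

def verify_with_pepper(password: str, salt: bytes, stored: bytes, pepper: bytes = PEPPER) -> bool:
    candidate = hashlib.pbkdf2_hmac("sha256", _peppered(password, pepper), salt, ITERATIONS)
    return hmac.compare_digest(candidate, stored)
```

An attacker who steals only the database gets salts and hashes but not the pepper, so even a dictionary attack has nothing to test guesses against.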

2.6. Symmetric Encryption

Symmetric encryption is probably the simplest encryption to understand in that it uses only a single key (in this case our key is labelled 'A') to both encrypt and decrypt data. Both parties need to know this secret key in order to communicate. This poses a security risk: if the channel used for key exchange is insecure, all of the messages can be decrypted. That being said, because it is simpler than many other forms of encryption, it is often used for secure communication and storage.

The one-time pad (OTP) is a rare example of a pen-and-paper symmetric encryption scheme that cannot be cracked, provided the key is truly random, at least as long as the message, and never reused. The difficulty with OTP mirrors the difficulty with all symmetric encryption, namely that pre-shared keys need to be exchanged at some point.

Imagine that a prisoner wishes to send encrypted messages to someone outside the prison. To do so, they will make use of a copy of Harry Potter and the Sorcerer’s Stone that they have in their cell. The message they want to send is "DIG UP THE GOLD". They turn to "Chapter One: The Boy Who Lived" and look up the first twelve letters in the chapter: MR AND MRS DURS. For each letter of their message, they convert it to its number in the alphabet: 4 9 7 21 16 20 8 5 7 15 12 4 (DIG UP THE GOLD). They do the same for the key they looked up in their book: 13 18 1 14 4 13 18 19 4 21 18 19 (MR AND MRS DURS). Finally they add the two numbers to get their ciphertext: 17 27 8 35 20 33 26 24 11 36 30 23.

If the prisoner sends that ciphertext to someone on the outside who knows that the key is the first chapter of Harry Potter and the Sorcerer’s Stone, that person will be able to subtract the key from each of the numbers in the ciphertext and discover the plaintext message. While OTP is theoretically unbreakable, anybody else who has the key can recover the text as well. This means that using common keys like popular books makes it trivial for a man-in-the-middle to decode the ciphertext. After all, the warden probably knows every book that the prisoner has in their cell.
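The prisoner's scheme can be checked mechanically. This sketch follows the text exactly: letters become numbers (A=1 through Z=26), key numbers are added without wrapping around, and decryption subtracts them again. The function names are illustrative.

```python
def letters_to_numbers(text: str):
    """A=1, B=2, ... Z=26; spaces and punctuation are skipped."""
    return [ord(ch) - ord("A") + 1 for ch in text.upper() if ch.isalpha()]

def encrypt(message: str, key_text: str):
    msg = letters_to_numbers(message)
    key = letters_to_numbers(key_text)[: len(msg)]  # one key letter per message letter
    if len(key) < len(msg):
        raise ValueError("key must be at least as long as the message")
    return [m + k for m, k in zip(msg, key)]

def decrypt(ciphertext, key_text: str) -> str:
    key = letters_to_numbers(key_text)[: len(ciphertext)]
    # Subtract the key, then convert 1..26 back to A..Z
    return "".join(chr(c - k + ord("A") - 1) for c, k in zip(ciphertext, key))

KEY = "MR AND MRS DURS"   # first twelve letters of the chapter, as in the text
ct = encrypt("DIG UP THE GOLD", KEY)
print(ct)                 # [17, 27, 8, 35, 20, 33, 26, 24, 11, 36, 30, 23]
print(decrypt(ct, KEY))   # DIGUPTHEGOLD
```

Anyone who guesses the book runs exactly the same `decrypt` call, which is the weakness the warden would exploit.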

OTP has been used by spy agencies, often for communications between individuals via dead-drops. In this situation tables of random characters printed in duplicate are exchanged as the key.

2.7. Asymmetric Encryption

An asymmetric encryption algorithm has already been demonstrated in the Mathematical Foundation section. Asymmetric encryption has a public key, which can be published anywhere and used to encrypt messages that only the holder of the private key (which is not published) can decrypt. For example, if you want to receive encrypted emails, you may make your GNU Privacy Guard (GPG) public key available on a public key server. This would allow anyone to look up your public key, encrypt a message that only you can read, and send you the ciphertext. Asymmetric encryption gets around the difficulties of key exchange via an untrusted channel (like email). Unfortunately, the cost of such a useful system is that asymmetric algorithms tend to be much slower than their symmetric counterparts.

2.8. Stream Ciphers

Stream ciphers encrypt data one symbol at a time, producing one ciphertext symbol for each cleartext symbol. Because a block cipher can often be used with a sufficiently small block size, stream encryption is not used as often. Technically the OTP example, when used one symbol at a time, is a stream cipher: the key comes in one symbol at a time, the cleartext comes in one symbol at a time, and an operation is performed (addition in the case of the example) to create the ciphertext. Given a suitable key size and a well-researched algorithm, stream ciphers can be just as secure as block ciphers. A stream cipher is also usually more consistent in its runtime characteristics and typically consumes less memory. Unfortunately, there are not as many well-researched and widely used stream ciphers.
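To make the one-symbol-at-a-time idea concrete, here is a toy stream cipher in Python. It is an illustrative sketch only: the keystream generator (SHA-256 over a key, a nonce, and a counter) is an assumption chosen because it is in the standard library, not a vetted stream cipher such as ChaCha20, and it should not be used to protect real data.

```python
import hashlib
from typing import Iterator

def keystream(key: bytes, nonce: bytes) -> Iterator[int]:
    """Yield keystream bytes one at a time (toy generator, not a vetted cipher)."""
    counter = 0
    while True:
        block = hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        yield from block          # hand out this block's 32 bytes individually
        counter += 1

def stream_xor(data: bytes, key: bytes, nonce: bytes) -> bytes:
    """Encrypt or decrypt: XOR each data byte with the next keystream byte."""
    ks = keystream(key, nonce)
    return bytes(b ^ next(ks) for b in data)

key, nonce = b"hypothetical key", b"nonce-01"
ciphertext = stream_xor(b"DIG UP THE GOLD", key, nonce)
plaintext = stream_xor(ciphertext, key, nonce)   # XOR is its own inverse
print(plaintext)  # b'DIG UP THE GOLD'
```

Because XOR undoes itself, the same function encrypts and decrypts. As with the OTP, reusing the same key and nonce for two messages would leak information about both.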

2.9. Block Ciphers

Block ciphers take in data in fixed-size blocks and encrypt each one into a ciphertext block of the same size. Block ciphers are very popular, and there are many secure algorithms to choose from. Unfortunately, if the input data doesn’t fit neatly into blocks, padding may be required, which takes up more space/memory and reduces the speed of the cipher. As such, block encryption is often less performant than stream encryption.
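Padding is easy to see in code. The sketch below uses PKCS#7-style padding, one common scheme (the text does not specify which scheme a particular cipher uses): each padding byte holds the number of bytes added, so a whole extra block is appended when the data already fits exactly.

```python
BLOCK_SIZE = 16  # bytes, the AES block size

def pad(data: bytes, block_size: int = BLOCK_SIZE) -> bytes:
    """Append N bytes, each with value N, so the length becomes a multiple of block_size."""
    n = block_size - (len(data) % block_size)   # always 1..block_size, never 0
    return data + bytes([n]) * n

def unpad(padded: bytes, block_size: int = BLOCK_SIZE) -> bytes:
    """Remove PKCS#7-style padding, checking that it is well formed."""
    if not padded or len(padded) % block_size:
        raise ValueError("padded data must be a non-empty multiple of the block size")
    n = padded[-1]
    if not 1 <= n <= block_size or padded[-n:] != bytes([n]) * n:
        raise ValueError("invalid padding")
    return padded[:-n]

msg = b"DIG UP THE GOLD"          # 15 bytes
print(len(pad(msg)))              # 16: one byte of padding
print(len(pad(msg + b"!")))       # 32: a whole extra block, since 16 bytes already fit
print(unpad(pad(msg)) == msg)     # True
```

The extra block in the second case is the space overhead the paragraph above describes.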

2.9.1. Block Cipher Modes of Operation

There are several ways to chain cipher blocks together, and depending on which mode of operation you choose, various attacks are possible:

Electronic Codebook (ECB)

The simplest mode of operation: data is divided into blocks and each block is encrypted independently with the same key. Since every block is encrypted the same way, identical plaintext blocks produce identical ciphertext blocks. This leaks patterns in the data, so given enough ciphertext an attacker can learn a great deal about the message without ever recovering the key.

Cipher block chaining (CBC)

Starting with an initialization vector (IV), each plaintext block is XORed with the previous ciphertext block (the IV for the first block) before being encrypted, creating a chain of ciphertext that is constantly changing. This means that identical plaintext blocks will result in different ciphertexts. CBC has long been one of the most common modes of operation; its weaknesses are that encryption cannot be run in parallel (sorry, modern processors) and that the IV is a common attack target, since a predictable or reused IV weakens the scheme.

Counter (CTR)

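Instead of chaining blocks together, CTR combines a nonce (a number used only once per message and shared by all of that message's blocks) with a counter. The counter is incremented with each block, and each nonce/counter pair is encrypted to produce a keystream block that is XORed with the plaintext. Because no block depends on the one before it, this mode can run in parallel. CTR mode solves the problems of ECB while still providing an algorithm that can run quickly on modern machines.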

Galois/Counter Mode (GCM)

GCM uses a counter like CTR, but its initial counter value is derived from an IV, which, like CTR's nonce, must never be reused with the same key. GCM also computes an authentication tag, a type of message authentication code (MAC), over the whole message so the recipient can verify that the ciphertext has not been modified. This combination makes for a modern, robust algorithm that is gaining rapid adoption.
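The practical difference between ECB, CBC, and CTR shows up when the plaintext contains repeated blocks. In the sketch below, `toy_encrypt_block` is a hypothetical stand-in for a real block cipher such as AES (it just XORs the block with the key, which is trivially breakable). Only the way each mode combines the blocks is meant to be realistic. GCM is left out because its authentication tag requires Galois-field arithmetic beyond a short example.

```python
import os

BLOCK = 16

def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def toy_encrypt_block(block: bytes, key: bytes) -> bytes:
    """Hypothetical stand-in for AES: NOT secure, just deterministic for a given key."""
    return xor(block, key)

def blocks(data: bytes) -> list[bytes]:
    assert len(data) % BLOCK == 0, "pad the data first"
    return [data[i:i + BLOCK] for i in range(0, len(data), BLOCK)]

def ecb_encrypt(data: bytes, key: bytes) -> bytes:
    # Every block is encrypted independently with the same key
    return b"".join(toy_encrypt_block(p, key) for p in blocks(data))

def cbc_encrypt(data: bytes, key: bytes, iv: bytes) -> bytes:
    out, prev = [], iv
    for p in blocks(data):
        # XOR with the previous ciphertext block (the IV for the first), then encrypt
        prev = toy_encrypt_block(xor(p, prev), key)
        out.append(prev)
    return b"".join(out)

def ctr_encrypt(data: bytes, key: bytes, nonce: bytes) -> bytes:
    out = []
    for counter, p in enumerate(blocks(data)):
        # Encrypt nonce || counter to get a keystream block; no block depends on another
        counter_block = nonce + counter.to_bytes(BLOCK - len(nonce), "big")
        out.append(xor(p, toy_encrypt_block(counter_block, key)))
    return b"".join(out)

key = bytes(range(16))                  # hypothetical key
plaintext = b"ATTACK AT DAWN!!" * 2     # two identical 16-byte blocks
for name, ct in [("ECB", ecb_encrypt(plaintext, key)),
                 ("CBC", cbc_encrypt(plaintext, key, os.urandom(BLOCK))),
                 ("CTR", ctr_encrypt(plaintext, key, os.urandom(8)))]:
    first, second = ct[:BLOCK], ct[BLOCK:]
    print(name, "repeats a block" if first == second else "hides the repetition")
```

Notice that each CBC iteration needs `prev` from the one before it, while the CTR loop body depends only on `counter`, which is why CTR can be parallelized.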

2.10. Encryption Examples

2.10.1. RSA

RSA is an asymmetric encryption standard developed in 1977 that is still very popular. Its trapdoor function is based on the difficulty of factoring large numbers. The name RSA comes from the names of the authors of the system: Ron Rivest, Adi Shamir, and Leonard Adleman.
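To see the trapdoor in action, here is textbook RSA with deliberately tiny primes (61 and 53, a commonly used classroom example). Real RSA uses primes hundreds of digits long plus a padding scheme such as OAEP; this sketch omits both and is for illustration only. It needs Python 3.8 or later for `pow(e, -1, phi)`.

```python
from math import gcd

# Key generation with toy-sized primes (real keys use primes of 1024+ bits)
p, q = 61, 53
n = p * q                   # modulus, part of both keys: 3233
phi = (p - 1) * (q - 1)     # 3120; easy to compute only if you can factor n
e = 17                      # public exponent, must be coprime with phi
assert gcd(e, phi) == 1
d = pow(e, -1, phi)         # private exponent: modular inverse of e, here 2753

def encrypt(m: int) -> int:
    return pow(m, e, n)     # anyone with the public key (n, e) can do this

def decrypt(c: int) -> int:
    return pow(c, d, n)     # only the holder of d can undo it

c = encrypt(65)
print(c, decrypt(c))        # 2790 65
```

An attacker who could factor 3233 back into 61 × 53 could recompute `phi` and `d`; with real key sizes, that factoring step is what is believed to be infeasible.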

2.10.2. Advanced Encryption Standard (AES)

AES is a symmetric block cipher developed in 1998 to supersede the less secure Data Encryption Standard (DES). AES works on 128-bit blocks of data, performing multiple rounds of substitution-permutation to encrypt data. You will find AES used to encrypt network traffic (as is the case in a virtual private network), data stored to disk (disk encryption), or computer game data that is saved to storage. AES is a very common cipher.

2.10.3. Elliptic-curve Cryptography (ECC)

ECC is an asymmetric cryptography scheme that is quite fast and easy to compute. It is rapidly becoming the go-to choice for digital signatures and key exchanges, having gained adoption starting in 2004. ECC is based on the geometry of a pre-determined set of curves (well-known examples include NIST P-256 and Curve25519), which can be used to create a trapdoor function.
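The sketch below shows the group operation that ECC is built on, using a tiny textbook curve, y² = x³ + 2x + 2 over the integers mod 17 with base point (5, 1), which has 19 points. The curve, the private keys, and the function names are illustrative assumptions; real systems use standardized curves with roughly 256-bit parameters. Multiplying the base point by a secret number is easy, but recovering that number from the result (the elliptic-curve discrete logarithm problem) is what serves as the trapdoor.

```python
# Toy curve y^2 = x^3 + A*x + B over integers mod P (far too small for real use)
P, A, B = 17, 2, 2
G = (5, 1)      # base point
N = 19          # number of points on this curve, so N * G is the point at infinity
INF = None      # the point at infinity (the group's identity element)

def on_curve(pt) -> bool:
    if pt is INF:
        return True
    x, y = pt
    return (y * y - (x ** 3 + A * x + B)) % P == 0

def add(p1, p2):
    """Add two curve points using the chord-and-tangent rule."""
    if p1 is INF:
        return p2
    if p2 is INF:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P == 0:
        return INF                                        # p2 is the inverse of p1
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow(2 * y1, -1, P)    # tangent line
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P, -1, P)     # chord through both points
    x3 = (slope * slope - x1 - x2) % P
    y3 = (slope * (x1 - x3) - y1) % P
    return (x3, y3)

def multiply(k: int, pt):
    """Compute k * pt with double-and-add."""
    result = INF
    while k:
        if k & 1:
            result = add(result, pt)
        pt = add(pt, pt)
        k >>= 1
    return result

# Elliptic-curve Diffie-Hellman with hypothetical private keys
alice_private, bob_private = 3, 7
alice_public = multiply(alice_private, G)
bob_public = multiply(bob_private, G)
shared_alice = multiply(alice_private, bob_public)
shared_bob = multiply(bob_private, alice_public)
print(shared_alice == shared_bob)   # True
```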

2.10.4. Diffie-Hellman Key Exchange

Given the slow nature of asymmetric algorithms, an application such as a VPN will often use asymmetric cryptography to agree on a shared secret key and then use that secret key with a faster symmetric algorithm such as AES. Diffie-Hellman does exactly that and was first published in 1976. Diffie-Hellman key exchange uses the same mathematical concepts as RSA, exponentiation and modular arithmetic, to great effect, but to visualize what is happening a metaphor of secret color mixing is used (see the included diagram). The shared key itself is never transmitted; each side computes it from its own private value and the other side's public value. It is important to remember that because the medium of exchange may be slow, a DH key exchange is designed to generate minimal traffic.
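The exchange can be traced with numbers small enough to check by hand. This is a toy sketch: the prime 23, generator 5, and private values 6 and 15 are illustrative assumptions (a common textbook example), while real deployments use primes of 2048 bits or more, or elliptic curves. Only `p`, `g`, and the two public values ever cross the network.

```python
# Publicly agreed parameters (toy-sized)
p, g = 23, 5

# Each side picks a private value and never sends it
alice_private, bob_private = 6, 15

# Each side sends g^private mod p over the untrusted channel
alice_public = pow(g, alice_private, p)   # 8
bob_public = pow(g, bob_private, p)       # 19

# Each side combines the other's public value with its own private value
alice_secret = pow(bob_public, alice_private, p)
bob_secret = pow(alice_public, bob_private, p)
print(alice_secret, bob_secret)           # 2 2
```

Both sides arrive at g^(6·15) mod 23 without ever sending it. In practice the shared value would then be run through a key derivation step to produce a symmetric key, for example for AES.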

2.10.5. Digital Certificates

A digital certificate is a set of credentials used to identify a company or an individual. Since asymmetric encryption requires knowing a party’s public key, a digital certificate includes that key as well as an ID of the owner. The question then becomes: how do you trust that the public key actually belongs to the alleged owner? That’s where the issuing authority comes in. A certificate authority (CA) signs the certificate, indicating that the ID and public key are correct. Certificates can be self-signed, but this sidesteps the trust placed in the CA and is often only done in testing. Since most certificates are used for encrypting web traffic, web browsers will typically warn you if a site is using a self-signed certificate.

Given how many certificates need to be issued and the work that needs to be done to verify them, most certificates are not issued by root CAs, but by intermediate CAs. Root CAs delegate the work to intermediate CAs and indicate their trust in them by signing the intermediate CAs’ certificates. This creates a chain of trust from the issued certificate (signed by the intermediate CA) to the intermediate CA (signed by the root CA) to the root CA (trusted by the browser). Tools that use this chain of trust keep a store of root CA certificates and update it from the companies that issue them as needed.
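The chain-of-trust check can be sketched with toy RSA signatures. Everything here is a simplified assumption for illustration: the `Cert` class, the CA names, and the tiny keys are hypothetical, and real certificates use the X.509 format, full-size keys, and padded signature schemes. The verifier walks from the site's certificate up to an issuer that appears in its root store.

```python
import hashlib
from dataclasses import dataclass

E = 65537  # public exponent shared by all toy keys

def make_toy_key(p: int, q: int) -> tuple[int, int]:
    """Return (n, d) for tiny primes p and q. Toy sizes only."""
    n, phi = p * q, (p - 1) * (q - 1)
    return n, pow(E, -1, phi)

@dataclass
class Cert:
    subject: str
    issuer: str
    n: int              # the subject's public modulus (exponent is E)
    signature: int = 0

    def digest(self, modulus: int) -> int:
        """Hash the signed fields and reduce the result below the signer's modulus."""
        data = f"{self.subject}|{self.issuer}|{self.n}".encode()
        return int.from_bytes(hashlib.sha256(data).digest(), "big") % modulus

def sign(cert: Cert, signer_n: int, signer_d: int) -> None:
    cert.signature = pow(cert.digest(signer_n), signer_d, signer_n)

def verify(cert: Cert, signer_n: int) -> bool:
    return pow(cert.signature, E, signer_n) == cert.digest(signer_n)

def verify_chain(chain: list[Cert], root_store: dict[str, Cert]) -> bool:
    """chain[0] is the site's certificate; each cert must be signed by the next one."""
    for cert, issuer in zip(chain, chain[1:]):
        if cert.issuer != issuer.subject or not verify(cert, issuer.n):
            return False
    root = root_store.get(chain[-1].issuer)   # the top of the chain must name a trusted root
    return root is not None and verify(chain[-1], root.n)

# Hypothetical CAs and site
root_n, root_d = make_toy_key(61, 53)
inter_n, inter_d = make_toy_key(89, 97)
site_n, _ = make_toy_key(101, 103)

root = Cert("Example Root CA", "Example Root CA", root_n)
sign(root, root_n, root_d)                          # self-signed, shipped in the store
intermediate = Cert("Example Intermediate CA", "Example Root CA", inter_n)
sign(intermediate, root_n, root_d)                  # root vouches for the intermediate
site = Cert("www.example.com", "Example Intermediate CA", site_n)
sign(site, inter_n, inter_d)                        # intermediate vouches for the site

store = {root.subject: root}
print(verify_chain([site, intermediate], store))    # True
```

Removing the root from `store` makes the same chain fail, which mirrors how a browser rejects a chain that does not end at a root it already trusts.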

The certificate store is very important, and while users rarely interact with it, it is often possible to install root CAs manually. This can be used to create a proxy that can decrypt HTTPS traffic, whether for debugging or for more nefarious purposes. For this reason, some applications, Facebook’s mobile apps for example, maintain their own certificate store and prevent users from adding root CAs to it.

So how do you get a certificate for your website? The website owner generates a Certificate Signing Request (CSR) that includes their public key and ID and sends it to a CA. The CA validates that the customer controls the website, then builds and signs the certificate. This whole process can be automated and performed for free using Let’s Encrypt, a certificate authority that issues certificates through the automated ACME protocol, typically with a client tool such as Certbot.

2.10.6. Blockchain

It is hard to talk about cryptography without addressing blockchains, one of the concepts behind cryptocurrencies. A blockchain is a shared ledger (of transactions, in the case of Bitcoin) to which new blocks are constantly being added. Each new block includes a hash of the previous block, and its own hash in turn becomes the reference stored in the next block. By examining these hashes, you can prove the integrity of each block and its position, creating a publicly available, mutually agreed-upon accounting of what has occurred on the network. Typically, to prevent bad actors from adding blocks, some sort of proof of work (a mathematically difficult operation) or proof of stake (an accounting of investment in the network) must be included when adding a block to the chain.
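A minimal hash chain with a toy proof of work can be sketched with Python's `hashlib`. The block fields, the use of SHA-256 over JSON, and the difficulty rule (a hash starting with a given number of zero hex digits) are illustrative assumptions loosely modeled on Bitcoin, not its actual block format.

```python
import hashlib
import json

DIFFICULTY = 3  # number of leading zero hex digits required (toy setting)

def block_hash(block: dict) -> str:
    # Hash a canonical JSON encoding of the block's contents
    return hashlib.sha256(json.dumps(block, sort_keys=True).encode()).hexdigest()

def mine_block(prev_hash: str, data: str) -> dict:
    """Search for a nonce that gives the block a hash meeting the difficulty (proof of work)."""
    nonce = 0
    while True:
        block = {"prev_hash": prev_hash, "data": data, "nonce": nonce}
        if block_hash(block).startswith("0" * DIFFICULTY):
            return block
        nonce += 1

def chain_is_valid(chain: list[dict]) -> bool:
    for prev, block in zip(chain, chain[1:]):
        if block["prev_hash"] != block_hash(prev):      # link to the previous block is broken
            return False
    return all(block_hash(b).startswith("0" * DIFFICULTY) for b in chain)

chain = [mine_block("0" * 64, "genesis")]
for tx in ["Alice pays Bob 5", "Bob pays Carol 2"]:
    chain.append(mine_block(block_hash(chain[-1]), tx))

print(chain_is_valid(chain))        # True
chain[1]["data"] = "Alice pays Bob 500"
print(chain_is_valid(chain))        # False: block 2's prev_hash no longer matches
```

To hide the edit, an attacker would have to redo the proof of work for the altered block and every block after it, faster than the rest of the network keeps extending the chain.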

2.10.7. Trusted Platform Module (TPM) / Hardware Security Module (HSM)

These modules provide hardware specifically for use with encryption. HSMs are removable modules while TPMs are motherboard chips. Many ciphers rely on a reliable source of entropy (randomness) which these modules provide. They can also significantly increase the speed at which cryptographic algorithms run by moving the operations to specialized hardware. Lastly, these modules can be used to store keys and make them only accessible via the module. This can add an extra layer of security to prevent the keys from being easily copied.

2.10.8. Steganography

Steganography is the process of hiding data in something such that a casual observer cannot detect it. Data can be hidden in audio, images, or even plain text! The hidden data can also be encrypted if an additional layer of security is required. In the field of security, malicious code may be hidden inside other files using steganographic techniques, which makes it more difficult for tools to find when searching storage.
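One classic technique, least-significant-bit (LSB) embedding, can be sketched on raw bytes. Here a `bytearray` stands in for image pixel values (an assumption; real tools must also handle the image file format), and each bit of the secret replaces the lowest bit of one cover byte, changing each value by at most 1.

```python
def hide(cover: bytes, secret: bytes) -> bytearray:
    """Store each bit of secret in the least significant bit of successive cover bytes."""
    bits = [(byte >> i) & 1 for byte in secret for i in range(7, -1, -1)]  # MSB first
    if len(bits) > len(cover):
        raise ValueError("cover is too small for the secret")
    stego = bytearray(cover)
    for i, bit in enumerate(bits):
        stego[i] = (stego[i] & 0xFE) | bit    # clear the lowest bit, then set it to our bit
    return stego

def reveal(stego: bytes, length: int) -> bytes:
    """Read `length` bytes back out of the low bits."""
    out = bytearray()
    for start in range(0, length * 8, 8):
        byte = 0
        for b in stego[start:start + 8]:
            byte = (byte << 1) | (b & 1)
        out.append(byte)
    return bytes(out)

cover = bytes(range(100, 200))            # hypothetical "pixel" values
stego = hide(cover, b"GOLD")
print(reveal(stego, 4))                                # b'GOLD'
print(max(abs(a - b) for a, b in zip(cover, stego)))  # 1
```

A change of 1 in a pixel's brightness is invisible to the eye, which is why a casual observer cannot tell the two images apart.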

2.11. Lab: Hash it Out

A hash is a one-way cryptographic function that produces a set of characters that, ideally, is unique to a given message. In a perfect world, given a hash you should not be able to determine what the original message was, but given a hash and the original message you can check that the hash matches the message. Before we dive into the uses of a hash, let’s try to further understand it by looking at a simple and consequently poor hashing algorithm.[3]

Anagram Hash

Let’s assume we wanted to hash the message "Hello from Karl" so that we have a string of characters that uniquely represents that phrase. One way to do it would be to strip all the spaces and punctuation from the message, make everything lowercase, and then arrange all the letters alphabetically. "Hello from Karl" becomes "aefhklllmoorr". You can think of it like saying, "There is one 'a' in the message, one 'e' in the message, one 'f' in the message, one 'h' in the message, one 'k' in the message, three 'l’s in the message…" Now our hash, "aefhklllmoorr", can be used to uniquely identify the phrase.
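The anagram hash is short enough to write out in full. This is a sketch of the steps just described, assuming that only letters are kept (so spaces, punctuation, and digits are all dropped); the function name is hypothetical.

```python
def anagram_hash(message: str) -> str:
    """Lowercase the letters, drop everything else, and sort them alphabetically."""
    return "".join(sorted(c.lower() for c in message if c.isalpha()))

print(anagram_hash("Hello from Karl"))   # aefhklllmoorr
```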

Now assume Karl wants to send us a message, but he can’t trust the person delivering it. He could use the untrusted party to send us the message and then put the hash someplace public, like on a website. We could use the hash to confirm that the message came from Karl unaltered, and if anyone else got the hash they would not be able to discern the message, because a hash is a one-way function. "aefhklllmoorr" reveals very little about the message, but it can be used to check its accuracy.

Hopefully this is beginning to show the power of hashes. Now let’s examine another very common use case and find out exactly why this is a terrible algorithm.

Assume you run a website where users log in with a password. You want to make sure users are entering the correct password when they log in, but you do not want to store the password on your website. This is quite common: if your website was breached, you don’t want to leak a bunch of people’s passwords. What do you do? You could store a hash of each user's password, hash the password a user enters when they try to log in, and compare the hashes. For example, if our password was "password", using our basic hash algorithm the hash would be "adoprssw". We could store "adoprssw" in our database, use it for comparison during login, and if someone were to ever steal the data in our database they wouldn’t know that the original password is "password". This may prevent an attacker from exploiting the fact that many people use the same password on multiple sites.

The problem is that there are many things that hash to "adoprssw" including "wordpass", "drowsaps", or even the hash we’re storing: "adoprssw". When multiple messages have the same hash it is referred to as a collision and this particular algorithm is useless because it generates so many of them.
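Here is a sketch of that login check, with a hypothetical in-memory dictionary standing in for the website's database. Because only the anagram hash is stored, any rearrangement of the letters is accepted as the password.

```python
def anagram_hash(message: str) -> str:
    return "".join(sorted(c.lower() for c in message if c.isalpha()))

# Hypothetical user database: we store the hash, never the password itself
users = {"karl": anagram_hash("password")}    # stores "adoprssw"

def login(username: str, password: str) -> bool:
    stored = users.get(username)
    return stored is not None and anagram_hash(password) == stored

for attempt in ["password", "wordpass", "drowsaps", "adoprssw", "letmein"]:
    print(attempt, login("karl", attempt))
# password True, wordpass True, drowsaps True, adoprssw True, letmein False
```

Every collision is a working password, which is why a real hash function must make collisions extremely hard to find.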

What would the anagram hash of "AlwaysDancing" be?

Now that we understand what hashes do and to some extent how they are possible, let’s look at a much more useful hash function.

MD5

For this section, we are going to be using Docker and a terminal. Please follow these directions for installing Docker. On Windows you can use the Windows Terminal app, and on macOS you can use the preinstalled Terminal app. Gray boxes show the commands as typed into the terminal with typical output where possible. Your prompt (the part shown before the command) may differ depending on your OS.

Start by running a BASH shell on a custom Linux container:

```
ryan@R90VJ3MK:/windir/c/Users/rxt1077/it230/docs$ docker run -it ryantolboom/hash (1)
root@8e0962021f85:/# (2)
```

(1) Here we are using the docker run command interactively (-i) with a terminal (-t), since this container runs bash by default.

(2) Notice the new prompt showing that we are root on this container.

MD5 is a message-digest algorithm that produces significantly better hashes than our anagram algorithm. Most Linux distributions include a simple utility named md5sum for creating an MD5 hash based on a file’s contents. Typically this is used to detect whether a file has been tampered with: a website may provide links to download software along with an MD5 hash of each file so that you can confirm that what you’ve downloaded is correct. Similarly, a security system may keep md5sums (MD5 hashes) of certain critical files to determine if they have been tampered with by malware.

Be aware, though, that practical collision attacks against MD5 are known, so it is no longer considered safe against a determined attacker, and stronger checksums such as SHA-256 (sha256sum) are preferred for security purposes.
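The digest that md5sum prints can also be computed with Python's standard `hashlib` module, which is handy when scripting integrity checks. This is a sketch; the function name is hypothetical, and reading in chunks is just a precaution so large files never have to fit in memory.

```python
import hashlib
import sys

def md5_file(path: str, chunk_size: int = 65536) -> str:
    """Return the hex MD5 digest of a file's contents, like md5sum's first column."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        # Read in chunks so large files never have to fit in memory at once
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

# Usage: python md5.py /etc/passwd   (prints "hash  filename", like md5sum)
if __name__ == "__main__":
    for path in sys.argv[1:]:
        print(f"{md5_file(path)}  {path}")
```

Run against the same file, the hex string should match the first column of md5sum's output.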

Let’s practice taking the md5sum of the /etc/passwd file:

```
root@8e0962021f85:/# md5sum /etc/passwd
9911b793a6ca29ad14ab9cb40671c5d7 /etc/passwd (1)
```

(1) The first part of this line is the MD5 hash; the second part is the file name.

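Now we’ll make a file with your first name in it and store it in /tmp/name.txt:

```
root@8e0962021f85:/# echo "<your_name>" >> /tmp/name.txt (1)
```

(1) Substitute your actual first name for <your_name>. Note that >> appends to the file, so running the command twice will add your name twice and change the hash.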

What is the md5sum of

For our final activity, let’s take a look at some of the weaknesses of hashes.

Hash Cracking

Passwords in a Linux system are hashed and stored in the /etc/shadow file. Let’s print out the contents of that file to see how it looks:

```
root@7f978dd90746:/# cat /etc/shadow
root:*:19219:0:99999:7:::
daemon:*:19219:0:99999:7:::
bin:*:19219:0:99999:7:::
sys:*:19219:0:99999:7:::
sync:*:19219:0:99999:7:::
games:*:19219:0:99999:7:::
man:*:19219:0:99999:7:::
lp:*:19219:0:99999:7:::
mail:*:19219:0:99999:7:::
news:*:19219:0:99999:7:::
uucp:*:19219:0:99999:7:::
proxy:*:19219:0:99999:7:::
www-data:*:19219:0:99999:7:::
backup:*:19219:0:99999:7:::
list:*:19219:0:99999:7:::
irc:*:19219:0:99999:7:::
gnats:*:19219:0:99999:7:::
nobody:*:19219:0:99999:7:::
_apt:*:19219:0:99999:7:::
karl:$y$j9T$oR2ZofMTuH3dpEGbw6c/y.$TwfvHgCl4sIp0b28YTepJ3YVvl/3UyWKeLCmDV1tAd9:19255:0:99999:7::: (1)
```

(1) As you can see here, the karl user has a long hash immediately after their username.

One of the problems with hashes is that if people choose simple passwords, their hashes can be easily cracked by a program that takes a wordlist of common passwords, generates their hashes, and then checks to see if any of them match. While a hash may be a one-way function, it is still subject to this type of attack. We’ll use a program called John the Ripper to do exactly that.
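The core loop of a wordlist attack is simple. The sketch below is not how John the Ripper is implemented; it assumes a salted SHA-256 hash as a stand-in for the system's password hash (Python's standard library has no yescrypt) and a tiny hypothetical wordlist, but the idea of hashing each candidate and comparing is the same.

```python
import hashlib
from typing import Optional

def hash_password(password: str, salt: bytes) -> str:
    """Stand-in for the system's password hash (assumption: salted SHA-256)."""
    return hashlib.sha256(salt + password.encode()).hexdigest()

def dictionary_attack(target: str, salt: bytes, wordlist: list[str]) -> Optional[str]:
    """Hash each candidate with the stored salt and return the first match."""
    for word in wordlist:
        if hash_password(word, salt) == target:
            return word
    return None

# Hypothetical leaked entry: the salt is stored right next to the hash
salt = b"hypothetical-salt"
leaked_hash = hash_password("sunshine", salt)

wordlist = ["123456", "password", "qwerty", "sunshine", "dragon"]
print(dictionary_attack(leaked_hash, salt, wordlist))   # sunshine
```

Real password hashes such as yescrypt are deliberately slow, which limits how many guesses per second a loop like this can make.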

John the Ripper is already installed on this container along with a simple wordlist. We will tell it to use the default wordlist to try to determine the password that matches karl’s hash in /etc/shadow:

```
root@8e0962021f85:/# john --format=crypt --wordlist=/usr/share/john/password.lst /etc/shadow
Loaded 1 password hash (crypt, generic crypt(3) [?/64])
Press 'q' or Ctrl-C to abort, almost any other key for status
<karl's password> (karl)
1g 0:00:00:01 100% 0.6211g/s 178.8p/s 178.8c/s 178.8C/s lacrosse..pumpkin
Use the "--show" option to display all of the cracked passwords reliably
Session completed
```

Once john has cracked a password, it will not show it again if you rerun the same command. To show the passwords that have already been cracked, run john with the --show option and the file: john --show /etc/shadow

Given that the password is in the included common password wordlist, /usr/share/john/password.lst, you will quickly find that John the Ripper figures out karl’s password.

John the Ripper can also run incrementally through all the possible character combinations, but this takes much longer.

To help make these types of attacks more difficult, every hash in /etc/shadow is built from the password combined with a random value. This value is called a salt and is stored alongside the hash. The salt is not secret, so a cracker can read it, but it prevents work from being reused: two users with the same password end up with different hashes, so each word in the wordlist must be hashed separately for every salt being attacked, slowing things down significantly.

Salts also defeat rainbow tables, which are huge precomputed tables of hashes that let a cracker look a hash up instead of computing it. These tables may take up terabytes of disk space and can make cracking unsalted hashes much simpler, but a separate table would be needed for every possible salt to be useful against salted hashes.
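The effect of a salt is easy to demonstrate. This sketch uses PBKDF2 from Python's `hashlib` as a stand-in for yescrypt, which the standard library does not provide; the iteration count and function names are illustrative assumptions. The same password stored for two users produces two unrelated hashes, so one precomputed table or one pass through a wordlist cannot cover both.

```python
import hashlib
import hmac
import os
from typing import Optional

ITERATIONS = 100_000  # illustrative work factor; makes every guess slower

def hash_password(password: str, salt: Optional[bytes] = None) -> tuple[bytes, bytes]:
    """Return (salt, hash); a fresh random salt is generated when none is given."""
    salt = salt if salt is not None else os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest

def check_password(password: str, salt: bytes, stored: bytes) -> bool:
    # Re-hash with the stored salt and compare in constant time
    _, candidate = hash_password(password, salt)
    return hmac.compare_digest(candidate, stored)

salt_a, hash_a = hash_password("password")   # user A
salt_b, hash_b = hash_password("password")   # user B picks the same password
print(hash_a == hash_b)                            # False: different salts, different hashes
print(check_password("password", salt_a, hash_a))  # True
```

Like /etc/shadow, the salt is stored in the clear next to the hash; its job is to make every user's hash unique, not to be secret.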

Let’s use a custom utility named crypt to show that we have the actual password. This utility is already installed on your container. We will start by printing out just the line in /etc/shadow that has karl’s info, using the grep command to limit our output to lines that have karl in them:

```
root@7f978dd90746:/# cat /etc/shadow | grep karl
karl:$y$j9T$oR2ZofMTuH3dpEGbw6c/y.$TwfvHgCl4sIp0b28YTepJ3YVvl/3UyWKeLCmDV1tAd9:19255:0:99999:7:::
```

The first part of the shadow line, before the first colon, is the username, karl. The next part, immediately following the first colon, is the hash information, which is itself divided up by $ characters:

- The characters between the first and second $ identify the hashing algorithm being used: y for yescrypt in our case.

- The characters between the second and third $ are the parameters passed to yescrypt, which will always be j9T for us.

- The characters between the third and fourth $ are the salt.

- Finally, the characters after the fourth $ are the hash itself.
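Splitting karl's line apart in Python makes the field layout explicit. This is a sketch that assumes the `$id$params$salt$hash` layout used by yescrypt entries like this one; entries produced by other algorithms can have a different number of `$`-separated fields.

```python
line = ("karl:$y$j9T$oR2ZofMTuH3dpEGbw6c/y.$TwfvHgCl4sIp0b28YTepJ3YVvl/3UyWKeLCmDV1tAd9"
        ":19255:0:99999:7:::")

username, hash_field = line.split(":")[:2]
# The hash field starts with "$", so the first element of the split is empty
_, algorithm, params, salt, hashed = hash_field.split("$")

print(username)    # karl
print(algorithm)   # y  (yescrypt)
print(params)      # j9T
print(salt)        # oR2ZofMTuH3dpEGbw6c/y.
print(hashed)      # TwfvHgCl4sIp0b28YTepJ3YVvl/3UyWKeLCmDV1tAd9
# Everything up to and including the salt is what gets handed to crypt:
print("$".join(["", algorithm, params, salt]))   # $y$j9T$oR2ZofMTuH3dpEGbw6c/y.
```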

The crypt utility calls the system's crypt function and prints the output. Let’s run this utility with the password we’ve cracked and the first three parts of the hash information from /etc/shadow (everything up to and including the salt). If everything goes well, you should see hash output that matches what is in /etc/shadow:

```
root@7f978dd90746:/# crypt <karl's password> '$y$j9T$oR2ZofMTuH3dpEGbw6c/y.' (1)
$y$j9T$oR2ZofMTuH3dpEGbw6c/y.$TwfvHgCl4sIp0b28YTepJ3YVvl/3UyWKeLCmDV1tAd9
```

(1) Don’t forget to use the actual password you cracked and to put the hash info in single quotes so the shell does not treat the $ characters as variables.

Submit a screenshot with your lab showing that the output of the

2.12. Review Questions

- What is the difference between symmetric and asymmetric encryption? Give one common use case for each.

- What is a hash and what is it used for? How are hashes used in a blockchain?

- What is the difference between a stream cipher and a block cipher? Give one common use case for each.

3. Malware

3.1. What is malware?

Malware is a portmanteau of the words malicious and software. The term is used to describe many different types of intentionally malicious programs. One of the key differences between malware and just plain bad software is the intentional aspect of its creation. Malware is designed to damage or exploit computer systems. It often spies on, spams, or otherwise damages target or host machines.

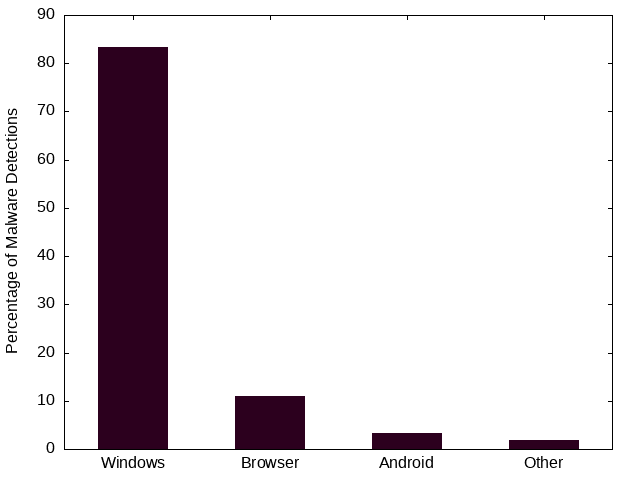

3.2. Malware Targets

The most popular target for malware is the Windows OS, by quite a large margin. This is due largely to its popularity as a desktop operating system. The second largest target is web browsers, which afford malware a unique cross-platform reach. The third largest target is the Android mobile operating system, which, while technically Linux-based, runs mostly on mobile phones. Neither Linux nor macOS receives as much malware attention. While this may be partially due to the open-source nature of Linux and of the BSD-derived parts of macOS, it is also partially due to the lower desktop popularity of each of these operating systems. Malware is often widely distributed, meaning it can target only the most popular, and possibly weakest, links and still be successful.

3.3. Types of Malware

The definition of malware is broad, and new malware is created daily, which can make it difficult to classify. As we go through some basic types, please keep in mind that there is significant overlap. For example, you may encounter ransomware distributed as a virus or ransomware distributed as a trojan. The fact that it is ransomware does not preclude it from being some other type of malware as well.

3.3.1. Worms, Viruses, and Trojans

Worms are self-propagating programs that spread without user interaction. Their code is typically stored within an independent object, such as a hidden executable file. Worms often do not severely damage their host, as they are concerned with rapid, exponential spreading.

Stuxnet was a 2010 worm that specifically targeted Iranian nuclear facilities. The worm used an unprecedented four zero-day exploits and was designed to spread via USB flash drives and Remote Procedure Calls (RPCs). In this way it didn’t rely on networks alone to propagate. Ultimately, Stuxnet’s payload targeted the code used to program the programmable logic controller (PLC) devices that control centrifuge motors, making the motors spin too fast and destroying the centrifuges. Stuxnet also employed an impressive rootkit to cover its tracks. Given its level of sophistication, Stuxnet is believed to have been developed by the US and Israel.

Viruses typically require user interaction, such as copying an infected file from one machine to another, and store their code inside another file on a machine. An executable file may be infected by having the virus code added as a separate section that executes before the standard program code. Viruses can be quite damaging to the host, as they may consume significant resources to spread locally. The term virus is also an unfortunately overloaded one. Due to its popularity, it is often used by some lower-skill threat actors to refer to many different types of malware.

The Concept virus was the first example of a Microsoft Word macro virus. The virus hid itself inside Microsoft Word files and used Word’s embedded macro language to perform its replication tasks. Viruses were later created for Excel and other programs that had sufficiently sophisticated yet ultimately insecure internal scripting languages.

A trojan is a form of malware that disguises itself as legitimate software. Rather than relying mainly on a software exploit, it exploits users, tricking them into installing, running, or giving extra privileges to the malicious code. Trojans are the most popular kind of malware, as they can be used as an attack vector for many other payloads. The name comes from Greek mythology, where a Trojan horse was disguised as a gift and given to the besieged city of Troy. Within the large horse were secret troops who came out in the middle of the night and opened the city gates.

Emotet is a banking trojan from 2014 that spread through emails. It made use of malicious links or macro-enabled documents to make the user download its code. Emotet has been one of the most costly and destructive pieces of malware, with incidents currently averaging about one million dollars each to remediate. It continues to be adapted to avoid detection and to make use of even more sophisticated malware.

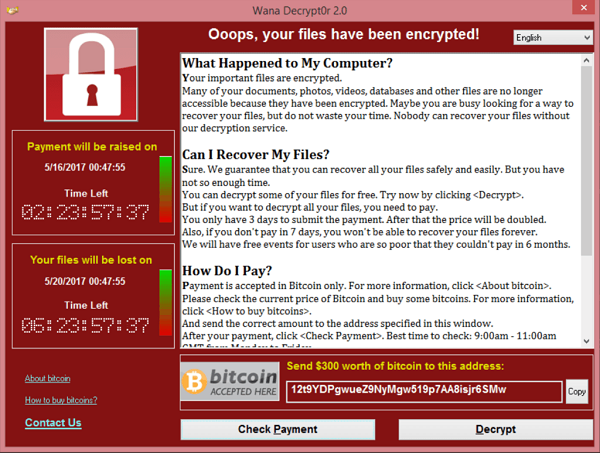

3.3.2. Ransomware

Ransomware is a type of malware that encrypts files and demands a ransom to decrypt them. Modern ransomware uses symmetric encryption to encrypt the files quickly and then encrypts the symmetric key asymmetrically, using a hard-coded public key for which the threat actor holds the corresponding private key. When the ransom is paid, typically via cryptocurrency, the threat actor can decrypt the symmetric key using their private key, and the victim can then use the symmetric key to decrypt the files.

Ransomware is also considered a data breach, as the data is often exfiltrated as well. It is also worth noting that when the ransom is paid, there is no guarantee that the threat actor will actually begin the decryption process. Typical targets of ransomware include corporate infrastructure and health care systems, although ransomware may also be spread indiscriminately. Ransom payouts can make this a large money-making enterprise, so many APTs and criminal groups employ it. Ransomware is widely considered the biggest threat to cyber stability today.

3.3.3. Spyware

Malware specifically designed for espionage/data theft is known as spyware. Like ransomware, spyware can also have a monetary payoff for the threat actor. Actors may use extortion, demanding payment or else the data will be leaked, which typically means either sold on the dark web or publicly posted. Once again, given the possibility of monetary gain, spyware is often associated with criminal groups. APTs may also use spyware to obtain secrets of national importance.

Customer data, trade secrets, proprietary data, and government secrets are all targets of spyware. Even outside of government systems, in the corporate setting, spyware is still a major threat.

3.3.4. Cryptojacking

Cryptocurrencies utilizing proof-of-work algorithms have made it easier than ever for programs to convert processor cycles into money. Certain types of malware capitalize on this by mining cryptocurrency in the background on a user’s machine. This theft of power and resources results in income for the malware distributor when the funds from mining are deposited into their online wallet.

Cryptojacking is more popular than ever, especially considering that large botnets of infected machines have already been created. Cryptojacking creates a simpler path to monetization for malicious actors who may already have control of many compromised machines.

3.3.5. Rootkit

A rootkit is a secret program designed to give back door access to a system. They are designed to remain hidden and may even actively disable or circumvent security software. Due to their low-level nature, many rootkits can be difficult to detect and even more difficult to remove.

Rootkits are often classified in accordance with the layer in which they are hidden:

- Firmware Rootkit: Firmware is code that a hardware device uses to run. It is often a thin layer of commands used for setting up and interfacing with the device. A firmware rootkit may reside in the BIOS of a motherboard and can be very difficult to remove.

- Bootloader Rootkit: A bootloader prepares the system to boot an operating system kernel, typically by loading the kernel into memory. A bootloader rootkit may hijack this process to load itself into a separate memory space or manipulate the kernel being loaded.

- Kernel-mode Rootkit: Many operating system kernels, including Linux, have the ability to load dynamic modules. These kernel modules have complete access to OS kernel operations. A kernel-mode rootkit can be difficult to detect on a live system, because the OS kernel that would be asked to detect the rootkit can no longer be trusted.

- Application Rootkit: An application or user-mode rootkit is usually installed as an application that runs in the background with administrative privileges. These rootkits are typically the easiest to develop and deploy, since low-level knowledge of the system's hardware is not required, but they are also the easiest to detect and remove.

3.3.6. Botnet

A botnet is a network of exploited hosts controlled by a single party. These hosts may be desktop computers, servers, or even internet of things (IoT) devices. Botnets are often used in large-scale distributed denial of service (DDoS) attacks where the nature of the attack is to have many machines flooding a single machine with traffic. Botnets may also be used to send spam emails as their access to SMTP email relay may vary depending on their internet service provider (ISP).

Bots are typically controlled through a command and control (C2, C&C) server. While this C2 server may use a custom protocol, it is far more typical for modern botnets to rely on other infrastructure. C2 traffic can use SSH, HTTP, Internet Relay Chat (IRC), or even Discord to send commands to bots and receive data from bots.

3.3.7. RAT

RAT stands for Remote Access Trojan, and it is used to gain full access to and control of a remote target. The malware distributor can browse the files on a computer, send keystrokes and mouse movements, view the screen, and/or monitor the input from the microphone and camera. RATs often actively bypass security controls, and as such they may be difficult to detect.

3.3.8. Adware / Potentially Unwanted Programs (PUP)

Adware is malware that is designed to track user behavior and deliver unwanted, sometimes intrusive, tailored ads. Adware may slow down a system and/or add ad walls to sites. This type of malware often targets a user’s web browser.

Potentially Unwanted Programs (PUPs) are typically downloaded as part of the install of another program. Common PUPs are browser toolbars, PDF readers, compression utilities, or browser extensions. These programs may have adware/spyware components in them and can also slow down a system.

3.4. Indicators of Compromise

An indicator of compromise (IoC) is an artifact that indicates an intrusion with high confidence. It is a way to tell if a machine has been a victim of malware. IoCs are publicly communicated by security professionals in an effort to help mitigate the effects of malware.

- Hash: Hashes of files that are known to be malicious. These can help in identifying trojans and worms.

- IP addresses: Tracking the IP addresses that malware connects to can be used to determine if a machine is infected.

- URLs/Domains: Tracking the URLs or domains that malware uses can also be used to determine if a machine is infected.

- Virus definition/signature: Executables and other files can be scanned for specific sequences of bytes that are unique to a particular virus. In this way, even if the malware is hiding within another file, it can still be detected.
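Hash-based and signature-based IoC checks can both be sketched in a few lines. The IoC hash set, the byte signature, and the function names below are hypothetical placeholders, not real threat intelligence; real scanners rely on curated feeds and far more sophisticated matching.

```python
import hashlib
from pathlib import Path

# Hypothetical IoC data: SHA-256 hashes of known-bad files and a known-bad byte sequence
BAD_HASHES = {
    hashlib.sha256(b"pretend this is a known malware sample").hexdigest(),
}
BAD_SIGNATURE = b"\xde\xad\xbe\xef-hypothetical-signature"

def check_file(path: Path) -> list[str]:
    """Return the reasons, if any, that a file matches our indicators."""
    data = path.read_bytes()
    findings = []
    if hashlib.sha256(data).hexdigest() in BAD_HASHES:
        findings.append("hash matches a known-bad file")
    if BAD_SIGNATURE in data:
        findings.append("contains a known-bad byte signature")
    return findings

def scan(directory: str) -> dict[str, list[str]]:
    """Check every regular file under directory, recursively."""
    results = {}
    for path in Path(directory).rglob("*"):
        if path.is_file():
            findings = check_file(path)
            if findings:
                results[str(path)] = findings
    return results
```

The hash check only catches an exact copy of a known file, while the signature check also finds the byte sequence when it is buried inside some other file, which is the advantage the signature bullet describes.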

3.5. Delivery of Malware

Malware is often delivered through social engineering, namely convincing an actor within an organization to download and run, or click on, something. It can also be delivered through the supply chain, by infiltrating the software packages a system depends on, or through a software exploit on a publicly exposed service. Some of the most common ways of spreading malware are detailed below.

3.5.1. Phishing

Phishing involves communicating with someone via a fraudulent message in an effort to make them perform an action that will harm them. It is broken into five main categories:

- Spear phishing: Sending phishing emails or other communications that are targeted towards a particular business or environment. These messages may include information about the inner workings of the organization in an attempt to prove their validity. They may also take advantage of a known, insecure practice at a particular organization. Spear phishing is not your standard wide-net phishing attempt, but more of a focused, tailored, custom campaign.

- Whaling: Targeting high-ranking individuals at an organization. Whaling is often used in conjunction with spear phishing.

- Smishing: Using SMS messages when phishing.

- Vishing: Using voice messages when phishing.

- Phishing sites: Threat actors can attempt to gain unauthorized access through information obtained from a non-business-related communication channel. For example, malicious actors may know that the CEO frequents a popular sailing forum. These actors could set up an account on the sailing forum to direct message the CEO for information.

3.5.2. SPAM

SPAM consists of large quantities of unsolicited emails. These emails may be malicious or they may simply be advertising. In either case SPAM accounts for nearly 85% of all email. It is interesting to note that sometimes the malware distributed through SPAM is actually used to send more SPAM through a victim’s machine. The war on SPAM is constantly evolving and while many updates have been made to the way we send email, many improvements have yet to be realized.

3.5.3. Dumpster Diving

Information that can ultimately lead to the spread of malware can also be found in improperly disposed trash. Old records or hard drives may contain corporate secrets or credentials that give someone unauthorized access. It is important to properly dispose of sensitive information, making sure that all things that need to be destroyed are destroyed in a complete manner.

3.5.4. Shoulder Surfing

PINs, passwords, and other data can also be recovered simply by looking over someone’s shoulder. These credentials could be the "in" that an attacker needs to spread malware. With the aid of optics, such as binoculars, shoulder surfing can even occur at a long distance. Privacy screens, which limit the angle at which a monitor can be seen, can help mitigate this type of attack.

3.5.5. Tailgating

Following behind someone who is entering a secure location with a credential is known as tailgating. People will often even hold secure doors open for someone whose hands are full. It is human nature to want to help people, but remember that the person behind you may have a USB key loaded with malware, ready to deploy as soon as they gain physical access to a machine in the building.

3.5.6. Impersonation/Identity Theft

Often as part of a phishing campaign, a threat actor will pretend to be someone else. This may be someone within the organization or someone with sufficient power outside the organization, such as a representative of a government oversight agency. Attackers may also use stolen credentials to make their messages appear official, once again giving them an easy route through which to deploy malware.

3.6. Cyber Killchain

One way of analyzing an attack involving malware is through the steps of the Cyber Killchain. The Cyber Killchain was developed by Lockheed Martin, which adapted the military concept of a kill chain for use in cybersecurity. The Cyber Killchain is broken into seven steps: Recon, Weaponization, Delivery, Exploitation, Installation, Command and Control, and Exfiltration (also called Actions on Objectives).

3.6.1. Recon

Recon is short for reconnaissance, military parlance for a preliminary survey used to gain information. During the recon phase, a malicious actor will gather as much information as possible. Methods used in this phase may be passive or active.

Passive recon involves gathering information without sending anything to the target. This typically involves accessing publicly available information, such as social media, published websites, and DNS records. If the actor already has access to the target's network, they may also passively sniff network packets.

Active recon involves interaction with the target. This can include port scanning, vulnerability scanning, brute forcing directories and filenames on an HTTP server, or even contacting workers. Active recon can yield more information, but it is also significantly easier to detect.
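Port scanning, the first active recon technique listed above, can be sketched with nothing more than Python's standard `socket` module. The example below is a minimal TCP "connect" scan, assuming a simple sequential loop and an illustrative half-second timeout; dedicated tools such as nmap are far faster and support stealthier scan types. Because it completes a full TCP handshake on every open port, this kind of scan is exactly the sort of traffic that makes active recon easy to detect. Only scan hosts you are authorized to test.

```python
import socket
from typing import Iterable, List


def tcp_connect_scan(host: str, ports: Iterable[int], timeout: float = 0.5) -> List[int]:
    """Return the ports on `host` that accept a full TCP connection.

    Each attempt completes the three-way handshake, so the target can easily log it.
    """
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)  # don't hang on filtered ports
            # connect_ex returns 0 on success instead of raising an exception
            if sock.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports
```

A call such as `tcp_connect_scan("127.0.0.1", range(1, 1025))` checks the well-known ports on your own machine.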

3.6.2. Weaponization

In the weaponization phase, the actor begins readying exploits for the vulnerabilities identified during recon. This may include tailoring malware, creating phishing emails, customizing tools, and preparing an environment for the attack. For malware to be effective, it must use the correct exploits and work under the target's OS and environment. Metasploit is a penetration testing framework that is often used in this step to create custom malware.

3.6.3. Delivery

During the delivery phase, the malware is handed over to the target. Typically, steps are taken to bypass detection systems. Delivery may involve sending emails that link to malware or exploiting vulnerable servers in order to run malware on them. At the end of this phase, an attacker typically waits for a callback from the malware via the command and control channel.

3.6.4. Exploitation

Technically, the exploitation step occurs once the malware is successfully executed. In many cases, this requires almost no interaction from the attacker. Once the malware is activated or the payload of an exploit is executed, the exploitation step is complete.

3.6.5. Installation

The installation step is typically performed by the malware once it is running. The malware installs itself, hides itself, and sets up persistence (the ability to restart after being stopped). The malware may escalate privileges or move laterally. It may also download and install additional second-stage payloads from a remote server. A common tactic is injecting downloaded code into an existing process to mask which process is performing questionable actions.

3.6.6. Command and Control (C2, C&C)

Malware will reach out via its Command and Control channel for more instructions. At this point an attacker may interact with the malware, giving it additional commands. C2 traffic is usually designed to blend in with existing traffic and not draw attention.
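Even when C2 traffic blends in with normal protocols, its timing can give it away: many implants "beacon" back to their server at regular intervals. The sketch below, from the defender's side, flags a series of connection timestamps to one destination whose gaps are suspiciously uniform. The function name and both thresholds (`min_events`, `max_cv`) are illustrative assumptions rather than tuned values, and attackers commonly add random jitter specifically to defeat simple checks like this one.

```python
from statistics import mean, pstdev
from typing import Sequence


def looks_like_beacon(timestamps: Sequence[float],
                      min_events: int = 6,
                      max_cv: float = 0.1) -> bool:
    """Flag connection times (in seconds) whose spacing is suspiciously regular.

    min_events and max_cv are illustrative thresholds, not recommended settings.
    """
    if len(timestamps) < min_events:
        return False  # too few connections to judge
    times = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    avg = mean(gaps)
    if avg <= 0:
        return False  # all connections at the same instant; not a beacon pattern
    # Coefficient of variation: spread of the gaps relative to their average
    cv = pstdev(gaps) / avg
    return cv <= max_cv
```

A host that connects out roughly every 60 seconds, as in `[0, 60, 121, 180, 241, 300, 359]`, is flagged, while a human browsing at irregular times usually is not.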

3.6.7. Exfiltration / Actions & Objectives

The final step involves getting data from the exploited systems or disabling or misusing the systems in some other way. At this point an attacker can use the C2 channel to pull sensitive information from the system, such as credit card information or password hashes. It is important to note that exfiltration of data may not be the only goal of the attack. An attacker can also disable the system, commit fraud with it, mine cryptocurrencies, etc. At this point the malicious actor is in complete control of the exploited system.

3.7. Lab: Malware Analysis

The website Any Run offers free interactive malware analysis. We will be using this site to avoid the complications of running malware in a VM.

Start by visiting Any Run and registering for an account with your NJIT email address. Once you have activated your account via email, follow the tutorial to learn how to analyze threats, using the demo-sample task provided by Any Run. Follow the prompts and watch how the process tree changes. Feel free to take your time; even after the time expires, you will still be able to look at the running processes and analyze HTTP Requests, Connections, DNS Requests, and Threats.

For this lab we are going to look at an example of Emotet, a banking Trojan discovered in 2014.

On the left-hand side of the Any Run site, click on Public tasks and search for the MD5 hash 0e106000b2ef3603477cb460f2fc1751.
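Searching by hash works because the same file always produces the same MD5 digest, so analysts can share and look up samples without passing the malware itself around. As a minimal sketch, Python's `hashlib` can compute the digest of a local file in chunks and compare it to the sample hash from this lab; the helper names `md5_of_file` and `matches_sample` are hypothetical. MD5 is no longer collision resistant, so it is fine for identifying known samples but should not be relied on for integrity against a deliberate attacker.

```python
import hashlib
from pathlib import Path

# MD5 hash of the Emotet sample used in this lab
SAMPLE_MD5 = "0e106000b2ef3603477cb460f2fc1751"


def md5_of_file(path: Path, chunk_size: int = 65536) -> str:
    """Return the hex MD5 digest of a file, reading it in chunks to limit memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps reading until f.read() returns b"" (end of file)
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_sample(path: Path) -> bool:
    """True if the file's MD5 digest equals the lab sample's hash."""
    return md5_of_file(path) == SAMPLE_MD5
```

On Linux, the `md5sum` command produces the same hex digest for a file.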

Choose one of the examples (there are three) and look through the screenshots to get an idea of how the malware is run.

It may also help to glance at the network traffic and the running processes.

Run the VM live by clicking Restart in the upper right-hand corner. Perform the actions necessary to trigger the malware, adding time as needed. Finally, open Notepad on the VM, type in your name, and take a unique screenshot.

Submit a unique screenshot of your VM.

Use the Any Run tools to analyze the malware you chose.

Answer the following questions in the text box provided:

3.8. Review Questions

-

Why might an APT choose to use fileless malware as opposed to malware that runs from a file on a machine?

-

What is an IoC? Give an example.

-

What is phishing? What are the five types of phishing? Give an example of each type.

4. Protocols

Protocols can be thought of as rules that dictate communication. A protocol may specify the syntax used, error correction, synchronization, or any other aspect of how communication occurs in a given context. In computer security it is important to have a thorough understanding of common protocols, as their weaknesses often determine whether and how an attack will occur. Protocols exist for both hardware and software and have been developed by individuals and organizations. Early networking protocols were often developed on mailing lists using Requests for Comments (RFCs). You may still see RFCs being crafted, referred to, or actively worked on, and some of the earliest web protocols are detailed in RFCs. More often than not, large protocols are developed by working groups and associations, such as the 802.11 working group at the Institute of Electrical and Electronics Engineers (IEEE), which handles WiFi protocols. These groups publish standards detailing how the protocols work.

This chapter will give a brief description of important protocols following the TCP/IP layering model. It is important to note that some of these protocols may reach across layers to accomplish tasks. In this case they will be grouped according to which layer they largely function within.

4.1. Network Access Layer

4.1.1. ARP

Address Resolution Protocol (ARP) is used on the local Ethernet segment to resolve IP addresses to MAC addresses. Since this protocol functions at the Ethernet segment level, security was not a primary concern in its design. Unfortunately, this means that ARP communications can be easily spoofed to cause a MitM scenario. A malicious actor simply sends out several unsolicited ARP packets (gratuitous ARP) announcing that traffic for a certain IP address should be sent to the actor's MAC address. Since IP-to-MAC mappings are cached in several places, it can take a long time for all of the caches to expire and for the problems caused by the malicious ARP frames to be resolved.

Dynamic ARP Inspection (DAI), a feature available on many managed switches, is designed to mitigate the issues with ARP. DAI reaches across layers to work with the DHCP lease database and drops ARP packets whose IP-to-MAC pairing does not match the one recorded when the DHCP lease was granted. While this can solve many of the issues associated with ARP, it is also good practice to use secure higher-level protocols such as HTTPS just in case.
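The core DAI decision can be sketched in a few lines. In the toy model below, a dictionary mapping each leased IP address to the MAC address that received the lease stands in for the switch's DHCP lease records, and an ARP packet is allowed only if its sender IP and sender MAC match that binding. The `ArpPacket` type and function names are hypothetical, and real switches add details this sketch omits, such as trusted ports that skip inspection.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ArpPacket:
    """Hypothetical, simplified view of the ARP fields DAI inspects."""
    sender_ip: str
    sender_mac: str


def normalize_mac(mac: str) -> str:
    """Compare MACs case-insensitively and accept '-' or ':' separators."""
    return mac.lower().replace("-", ":")


def dai_permits(packet: ArpPacket, bindings: Dict[str, str]) -> bool:
    """Allow the packet only if its sender IP/MAC pair matches a DHCP binding.

    `bindings` maps leased IP -> MAC, standing in for the DHCP lease database.
    """
    expected_mac = bindings.get(packet.sender_ip)
    if expected_mac is None:
        return False  # no lease on record for this IP, so drop it
    return normalize_mac(expected_mac) == normalize_mac(packet.sender_mac)
```

A spoofed gratuitous ARP that claims the gateway's IP address with the attacker's MAC fails this check, because the lease for the gateway's IP was recorded with a different MAC.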

4.1.2. Wifi

The Wifi protocols we are most concerned with are the security standards used to encrypt data. Because Wifi is a wireless protocol, information sent on the network is available to anyone with an antenna. These Wifi security standards are the only thing protecting your network traffic from being viewed by anyone within transmitting range. There are currently four standards:

- WEP

-

Wired Equivalent Privacy (WEP) is deprecated and should not be used. It was developed in 1999 and uses the RC4 stream cipher with a 24-bit initialization vector (IV). Several attacks have been developed that can crack WEP within a matter of seconds.

- WPA

-

Wifi Protected Access (WPA) used the Temporal Key Integrity Protocol (TKIP) to change the encryption key for every packet. This 128-bit encryption method has also been broken, and the protocol should not be used.

- WPA2

-

Wifi Protected Access 2 (WPA2) makes use of AES encryption and is currently the most popular standard. WPA2 is still considered secure.

- WPA3

-

Wifi Protected Access 3 (WPA3) was developed in 2018 and is currently considered state-of-the-art. Many networks are beginning the transition from WPA2 to WPA3.
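A little arithmetic shows why WEP's 24-bit IV is such a weakness. There are only 2^24 = 16,777,216 possible IVs, and because WEP builds each packet's RC4 key from the IV plus the shared key, two packets with the same IV are encrypted with the same keystream. The sketch below applies the birthday paradox, assuming (as a simplification) that each packet's IV is chosen uniformly at random; the function names are hypothetical.

```python
def iv_collision_probability(packets: int, iv_bits: int = 24) -> float:
    """Probability that at least two of `packets` random IVs are identical."""
    space = 2 ** iv_bits
    p_all_distinct = 1.0
    for i in range(packets):
        # The (i+1)-th IV must avoid the i IVs already used
        p_all_distinct *= (space - i) / space
    return 1.0 - p_all_distinct


def packets_for_even_odds(iv_bits: int = 24) -> int:
    """Smallest number of packets at which an IV repeat is at least as likely as not."""
    space = 2 ** iv_bits
    p_all_distinct = 1.0
    n = 0
    while p_all_distinct > 0.5:
        p_all_distinct *= (space - n) / space
        n += 1
    return n
```

Under this assumption, `packets_for_even_odds()` comes out at roughly 4,800 packets, a volume a busy network sends in moments.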

4.2. Internet Layer Protocols

4.2.1. IP

IP stands for Internet Protocol, and it was devised to allow the creation of a network of networks. The network of networks that primarily uses it is the Internet, although IP can be used in other scenarios as well. IP is largely concerned with routing traffic to and across networks. The protocol was first described in a 1974 paper published by the IEEE and comes from the Advanced Research Projects Agency Network (ARPANET) project, which created the first large, packet-switched network.

Most people are familiar with IP addresses, the unique numbers given to hosts participating in an IP network. Currently there are two main versions of IP, IPv4 and IPv6, and one of the major differences between them is how many addresses are available. IPv4 uses 32-bit addresses and IPv6 uses 128-bit addresses. To give an idea of how big a change that is, all available IPv4 addresses have already been allocated, but with IPv6 we could give an address to every grain of sand on the beaches of Earth and still not run out.
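The difference in size between the two address spaces is easy to check with Python's standard `ipaddress` module: the networks `0.0.0.0/0` and `::/0` contain every IPv4 and every IPv6 address, respectively. This short sketch simply counts them; IPv4 has 4,294,967,296 addresses, and IPv6 has 2^96 times as many.

```python
import ipaddress

# The "/0" networks cover the entire address space of each version
ipv4_total = ipaddress.ip_network("0.0.0.0/0").num_addresses  # 2**32
ipv6_total = ipaddress.ip_network("::/0").num_addresses       # 2**128

print(f"IPv4: {ipv4_total:,} addresses")
print(f"IPv6: {ipv6_total:,} addresses")
# The ratio is an exact power of two, so bit_length() - 1 recovers its exponent
print(f"IPv6 has 2**{(ipv6_total // ipv4_total).bit_length() - 1} times as many")
```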

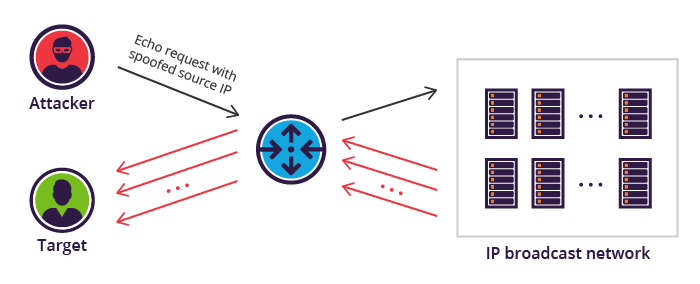

4.2.2. ICMP

Internet Control Message Protocol (ICMP) is largely used to send messages between systems when IP delivery fails. For example, let's say we try to connect to a host, but our router doesn't know how to get there. Our router can send us an ICMP Destination Unreachable message to let us know that something is going wrong. Because ICMP operates at the internet layer alongside IP itself, routers can report these problems directly, without needing a working transport-layer connection.

The most common use for ICMP is the ping command.

ping sends an ICMP echo request to check whether a host is up.

If the host answers with an ICMP echo reply containing the same data that was included in the request, we can assume that the host is up and functioning.
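To make the echo request concrete, the sketch below builds the bytes of an ICMP echo request: an 8-byte header (type 8, code 0, checksum, identifier, sequence number) followed by the payload that the host is expected to echo back. The checksum is the standard Internet checksum, a one's-complement sum of 16-bit words. The function names are hypothetical, and the sketch only constructs the packet; actually sending it requires a raw socket, which normally needs root or administrator privileges.

```python
import struct

ICMP_ECHO_REQUEST = 8  # type 8 = echo request; an echo reply uses type 0


def internet_checksum(data: bytes) -> int:
    """One's-complement sum of 16-bit big-endian words, then inverted."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length data with a zero byte
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
    # Fold any carries above 16 bits back into the low 16 bits
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_echo_request(identifier: int, sequence: int, payload: bytes) -> bytes:
    """Return an ICMP echo request (header + payload) with a valid checksum."""
    # Compute the checksum with the checksum field set to zero...
    header = struct.pack("!BBHHH", ICMP_ECHO_REQUEST, 0, 0, identifier, sequence)
    checksum = internet_checksum(header + payload)
    # ...then rebuild the header with the real checksum filled in
    header = struct.pack("!BBHHH", ICMP_ECHO_REQUEST, 0, checksum, identifier, sequence)
    return header + payload
```

A receiver validates the packet by running the same checksum over the whole message: for an undamaged packet the result is 0.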

ICMP is also used in the traceroute command.

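traceroute incrementally increases the Time To Live (TTL) field of the probe packets it sends (ICMP echo requests with Windows tracert, UDP datagrams by default with Linux traceroute) and watches for the ICMP Time Exceeded messages that routers return, to determine what route packets take to get to a host.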
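The TTL mechanism can be illustrated with a toy simulation that sends no real packets. Each router on a hypothetical path decrements the TTL; the router that brings it to zero "answers" with Time Exceeded, which reveals that hop, and a probe that survives every router reaches the destination. Routers listed in the hypothetical `silent` set model devices that do not send Time Exceeded messages, which show up as `*` entries in real traceroute output.

```python
from typing import List, Optional, Set, Tuple


def send_probe(path: List[str], ttl: int, silent: Set[str]) -> Tuple[Optional[str], bool]:
    """Model one probe. Returns (responder or None if silent, reached_destination)."""
    for router in path[:-1]:  # every hop except the final destination host
        ttl -= 1  # each router decrements TTL before forwarding
        if ttl == 0:
            # TTL expired here: this router would send ICMP Time Exceeded (unless silent)
            return (None if router in silent else router), False
    return path[-1], True  # the probe survived every router and reached the host


def trace(path: List[str], max_hops: int = 30,
          silent: Optional[Set[str]] = None) -> List[Tuple[int, str]]:
    """Send probes with TTL = 1, 2, 3, ... and record who answered each one."""
    silent = silent or set()
    hops = []
    for ttl in range(1, max_hops + 1):
        responder, reached = send_probe(path, ttl, silent)
        hops.append((ttl, responder if responder is not None else "*"))
        if reached:
            break
    return hops
```

For instance, tracing the path `["r1", "r2", "dst"]` with `r2` silent yields `[(1, "r1"), (2, "*"), (3, "dst")]`.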

Example traceroute output is shown below:

traceroute to 8.8.8.8 (8.8.8.8), 30 hops max, 60 byte packets

1 ryan.njitdm.campus.njit.edu (172.24.80.1) 0.217 ms 0.200 ms 0.252 ms

2 ROOter.lan (192.168.2.1) 5.790 ms 5.765 ms 6.275 ms

3 * * * (1)

4 B4307.NWRKNJ-LCR-21.verizon-gni.net (130.81.27.166) 19.166 ms 19.144 ms 21.097 ms

5 * * * (1)